Cifar10


This page gives a quick introduction to OpenPifPaf’s Cifar10 plugin that is part of openpifpaf.plugins.

It demonstrates the plugin architecture.

There already is a nice dataset for CIFAR10 in torchvision and a related PyTorch tutorial.

The plugin adds a DataModule that uses this dataset.

Let’s start with the setup for this notebook and register all available OpenPifPaf plugins:

import openpifpaf

print(openpifpaf.plugin.REGISTERED.keys())

dict_keys(['openpifpaf.plugins.animalpose', 'openpifpaf.plugins.apollocar3d', 'openpifpaf.plugins.cifar10', 'openpifpaf.plugins.coco', 'openpifpaf.plugins.crowdpose', 'openpifpaf.plugins.nuscenes', 'openpifpaf.plugins.posetrack', 'openpifpaf.plugins.wholebody', 'openpifpaf_extras'])
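To make the idea of a plugin registry concrete, here is a minimal sketch of the general pattern. It is not OpenPifPaf's implementation: every name in it (`ToyCifar10Plugin`, `ToyCifar10DataModule`, `register_plugins`, and the two dictionaries) is hypothetical and only illustrates how a plugin can add its components to shared lookup tables.

```python
# Hypothetical stand-ins for a plugin registry; names are illustrative only.
REGISTERED = {}   # plugin name -> plugin object
DATAMODULES = {}  # dataset name -> datamodule class


class ToyCifar10DataModule:
    """Placeholder for a datamodule class that a plugin would provide."""
    batch_size = 1


class ToyCifar10Plugin:
    """A plugin exposes a register() hook that adds its components."""
    name = 'toy.plugins.cifar10'

    @staticmethod
    def register():
        DATAMODULES['cifar10'] = ToyCifar10DataModule


def register_plugins(plugins):
    """Call each plugin's register() hook once and remember it by name."""
    for plugin in plugins:
        if plugin.name in REGISTERED:
            continue  # already registered, do nothing
        plugin.register()
        REGISTERED[plugin.name] = plugin


register_plugins([ToyCifar10Plugin])
print(REGISTERED.keys())
print(DATAMODULES['cifar10'].__name__)
```

After registration, the rest of the code can look up a dataset by its name without importing the plugin module directly.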

Next, we configure and instantiate the Cifar10 datamodule and look at the configured head metas:

# configure
openpifpaf.plugins.cifar10.datamodule.Cifar10.debug = True
openpifpaf.plugins.cifar10.datamodule.Cifar10.batch_size = 1

# instantiate and inspect
datamodule = openpifpaf.plugins.cifar10.datamodule.Cifar10()
datamodule.set_loader_workers(0)  # no multi-processing to see debug outputs in main thread
datamodule.head_metas

[CifDet(name='cifdet', dataset='cifar10', head_index=None, base_stride=None, upsample_stride=1, categories=('plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck'), training_weights=None)]

We see here that CIFAR10 is being treated as a detection dataset (CifDet) and has 10 categories.
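One way to picture a classification dataset as a detection dataset is to wrap each class label as a single detection-style annotation. The sketch below is only an illustration under stated assumptions: the function `label_to_detection`, the dictionary layout, and the choice of a box that covers the whole 32x32 image are hypothetical and are not taken from the plugin. Only the category names come from the head meta above.

```python
CATEGORIES = ('plane', 'car', 'bird', 'cat', 'deer',
              'dog', 'frog', 'horse', 'ship', 'truck')
IMAGE_SIZE = 32  # CIFAR10 images are 32x32 pixels


def label_to_detection(label_index):
    """Wrap a class index as one detection-style annotation (hypothetical layout)."""
    if not 0 <= label_index < len(CATEGORIES):
        raise ValueError(f'label index out of range: {label_index}')
    return {
        'category': CATEGORIES[label_index],
        'category_index': label_index,
        # Assumption: the object fills the whole image; (x, y, width, height).
        'bbox': (0, 0, IMAGE_SIZE, IMAGE_SIZE),
    }


print(label_to_detection(9))
```

With this view, every image carries exactly one "detection" whose category is the original class label.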

To create a network, we use the factory() method of openpifpaf.network.Factory. The factory is configured with the name of the base network, cifar10net, and factory() takes the list of head metas.

net = openpifpaf.network.Factory(base_name='cifar10net').factory(head_metas=datamodule.head_metas)

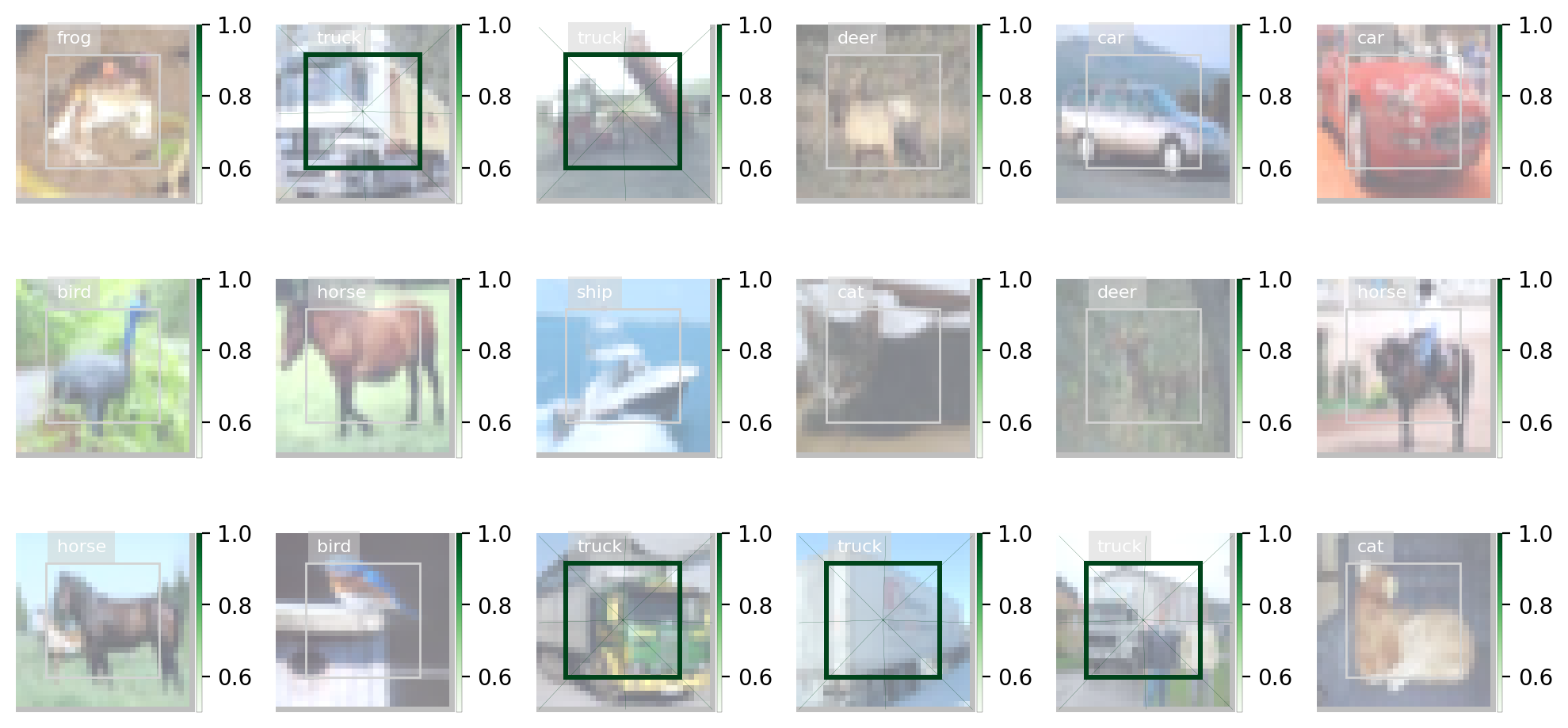

We can inspect the training data that is returned from datamodule.train_loader():

# configure visualization
openpifpaf.visualizer.Base.set_all_indices(['cifdet:9:regression'])  # category 9 = truck

# Create a wrapper for a data loader that iterates over a set of matplotlib axes.
# The only purpose is to set a different matplotlib axis before each call to
# retrieve the next image from the data_loader so that it produces multiple
# debug images in one canvas side-by-side.
def loop_over_axes(axes, data_loader):
    previous_common_ax = openpifpaf.visualizer.Base.common_ax
    train_loader_iter = iter(data_loader)
    for ax in axes.reshape(-1):
        openpifpaf.visualizer.Base.common_ax = ax
        yield next(train_loader_iter, None)
    openpifpaf.visualizer.Base.common_ax = previous_common_ax

# create a canvas and loop over the first few entries in the training data
with openpifpaf.show.canvas(ncols=6, nrows=3, figsize=(10, 5)) as axs:
    for images, targets, meta in loop_over_axes(axs, datamodule.train_loader()):
        pass
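The wrapper above is a generator that changes a shared class attribute before it yields each item. The same pattern can be tried without OpenPifPaf or matplotlib. In the sketch below, `Visualizer` and `loop_over_targets` are hypothetical stand-ins, and strings take the place of axes and images. Unlike the original, this version restores the previous value in a `finally` clause, which is a design choice made here so that the value is also restored when the loop is left early.

```python
class Visualizer:
    """Stand-in for a class with a shared, class-level drawing target."""
    common_ax = None


def loop_over_targets(targets, items):
    """Yield items one by one, pointing Visualizer.common_ax at a new target each time."""
    previous = Visualizer.common_ax
    item_iter = iter(items)
    try:
        for target in targets:
            Visualizer.common_ax = target
            # next(..., None) yields None once the items run out
            yield next(item_iter, None)
    finally:
        # restore the shared attribute when the generator finishes or is closed
        Visualizer.common_ax = previous


seen = []
for item in loop_over_targets(['ax0', 'ax1', 'ax2'], ['img0', 'img1']):
    seen.append((Visualizer.common_ax, item))

print(seen)
print(Visualizer.common_ax)
```

Because the attribute is set just before each `yield`, whatever code runs while the item is produced or consumed sees the matching target.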

Training

We train a very small network, cifar10net, for only three epochs. Afterwards, we will investigate its predictions.

%%bash
python -m openpifpaf.train \
  --dataset=cifar10 --basenet=cifar10net --log-interval=50 \
  --epochs=3 --lr=0.0003 --momentum=0.95 --batch-size=16 \
  --lr-warm-up-epochs=0.1 --lr-decay 2.0 2.5 --lr-decay-epochs=0.1 \
  --loader-workers=2 --output=cifar10_tutorial.pkl
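The learning-rate flags in this command can be read as a schedule over training progress. The following is a minimal sketch, assuming an exponential warm-up and an exponential decay. That shape is an assumption: it was chosen because it reproduces the lr values in the log that follows (3e-07 at batch 0, 0.00022757 at batch 300), and it is not copied from OpenPifPaf's code. The function name `learning_rate` is hypothetical. The warm-up factor 0.001 and decay factor 0.1 are the defaults reported in the logged arguments, and 50,000 is the size of the CIFAR10 training split.

```python
TRAIN_IMAGES = 50_000  # size of the CIFAR10 training split
BATCH_SIZE = 16        # --batch-size
BATCHES_PER_EPOCH = TRAIN_IMAGES // BATCH_SIZE

BASE_LR = 0.0003           # --lr
WARM_UP_EPOCHS = 0.1       # --lr-warm-up-epochs
WARM_UP_FACTOR = 0.001     # lr_warm_up_factor in the logged arguments
DECAY_STARTS = (2.0, 2.5)  # --lr-decay
DECAY_EPOCHS = 0.1         # --lr-decay-epochs
DECAY_FACTOR = 0.1         # lr_decay_factor in the logged arguments


def learning_rate(epoch, batch):
    """Learning rate at a given epoch and batch index (assumed schedule shape)."""
    progress = epoch + batch / BATCHES_PER_EPOCH  # fractional epochs
    scale = 1.0
    if progress < WARM_UP_EPOCHS:
        # exponential warm-up from WARM_UP_FACTOR * BASE_LR up to BASE_LR
        scale *= WARM_UP_FACTOR ** (1.0 - progress / WARM_UP_EPOCHS)
    for start in DECAY_STARTS:
        if progress >= start + DECAY_EPOCHS:
            scale *= DECAY_FACTOR  # decay step fully applied
        elif progress > start:
            # smooth transition over DECAY_EPOCHS
            scale *= DECAY_FACTOR ** ((progress - start) / DECAY_EPOCHS)
    return BASE_LR * scale


print(BATCHES_PER_EPOCH)
for batch in (0, 100, 300, 350):
    print(batch, learning_rate(0, batch))
```

With these settings the warm-up ends after 0.1 × 3125 = 312.5 batches, which is consistent with the log reporting lr 0.0003 from batch 350 onwards.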

INFO:__main__:neural network device: cpu (CUDA available: False, count: 0)

INFO:openpifpaf.network.basenetworks:cifar10net: stride = 16, output features = 128

INFO:openpifpaf.network.losses.multi_head:multihead loss: ['cifar10.cifdet.c', 'cifar10.cifdet.vec'], [1.0, 1.0]

INFO:openpifpaf.logger:{'type': 'process', 'argv': ['/opt/hostedtoolcache/Python/3.8.16/x64/lib/python3.8/site-packages/openpifpaf/train.py', '--dataset=cifar10', '--basenet=cifar10net', '--log-interval=50', '--epochs=3', '--lr=0.0003', '--momentum=0.95', '--batch-size=16', '--lr-warm-up-epochs=0.1', '--lr-decay', '2.0', '2.5', '--lr-decay-epochs=0.1', '--loader-workers=2', '--output=cifar10_tutorial.pkl'], 'args': {'output': 'cifar10_tutorial.pkl', 'disable_cuda': False, 'ddp': False, 'local_rank': None, 'sync_batchnorm': True, 'quiet': False, 'debug': False, 'log_stats': False, 'resnet_pretrained': True, 'resnet_pool0_stride': 0, 'resnet_input_conv_stride': 2, 'resnet_input_conv2_stride': 0, 'resnet_block5_dilation': 1, 'resnet_remove_last_block': False, 'shufflenetv2k_input_conv2_stride': 0, 'shufflenetv2k_input_conv2_outchannels': None, 'shufflenetv2k_stage4_dilation': 1, 'shufflenetv2k_kernel': 5, 'shufflenetv2k_conv5_as_stage': False, 'shufflenetv2k_instance_norm': False, 'shufflenetv2k_group_norm': False, 'shufflenetv2k_leaky_relu': False, 'mobilenetv2_pretrained': True, 'swin_drop_path_rate': 0.2, 'swin_input_upsample': False, 'swin_use_fpn': False, 'swin_fpn_out_channels': None, 'swin_fpn_level': 3, 'swin_pretrained': True, 'xcit_out_channels': None, 'xcit_out_maxpool': False, 'xcit_pretrained': True, 'mobilenetv3_pretrained': True, 'shufflenetv2_pretrained': True, 'cf4_dropout': 0.0, 'cf4_inplace_ops': True, 'checkpoint': None, 'basenet': 'cifar10net', 'cross_talk': 0.0, 'download_progress': True, 'head_consolidation': 'filter_and_extend', 'lambdas': None, 'component_lambdas': None, 'auto_tune_mtl': False, 'auto_tune_mtl_variance': False, 'task_sparsity_weight': 0.0, 'focal_alpha': 0.5, 'focal_gamma': 1.0, 'bce_soft_clamp': 5.0, 'bce_background_clamp': -15.0, 'regression_soft_clamp': 5.0, 'b_scale': 1.0, 'scale_log': False, 'scale_soft_clamp': 5.0, 'epochs': 3, 'train_batches': None, 'val_batches': None, 'clip_grad_norm': 0.0, 'clip_grad_value': 0.0, 
'log_interval': 50, 'val_interval': 1, 'stride_apply': 1, 'fix_batch_norm': False, 'ema': 0.01, 'profile': None, 'cif_side_length': 4, 'caf_min_size': 3, 'caf_fixed_size': False, 'caf_aspect_ratio': 0.0, 'encoder_suppress_selfhidden': True, 'encoder_suppress_invisible': False, 'encoder_suppress_collision': False, 'momentum': 0.95, 'beta2': 0.999, 'adam_eps': 1e-06, 'nesterov': True, 'weight_decay': 0.0, 'adam': False, 'amsgrad': False, 'lr': 0.0003, 'lr_decay': [2.0, 2.5], 'lr_decay_factor': 0.1, 'lr_decay_epochs': 0.1, 'lr_warm_up_start_epoch': 0, 'lr_warm_up_epochs': 0.1, 'lr_warm_up_factor': 0.001, 'lr_warm_restarts': [], 'lr_warm_restart_duration': 0.5, 'dataset': 'cifar10', 'loader_workers': 2, 'batch_size': 16, 'dataset_weights': None, 'animal_train_annotations': 'data-animalpose/annotations/animal_keypoints_20_train.json', 'animal_val_annotations': 'data-animalpose/annotations/animal_keypoints_20_val.json', 'animal_train_image_dir': 'data-animalpose/images/train/', 'animal_val_image_dir': 'data-animalpose/images/val/', 'animal_square_edge': 513, 'animal_extended_scale': False, 'animal_orientation_invariant': 0.0, 'animal_blur': 0.0, 'animal_augmentation': True, 'animal_rescale_images': 1.0, 'animal_upsample': 1, 'animal_min_kp_anns': 1, 'animal_bmin': 1, 'animal_eval_test2017': False, 'animal_eval_testdev2017': False, 'animal_eval_annotation_filter': True, 'animal_eval_long_edge': 0, 'animal_eval_extended_scale': False, 'animal_eval_orientation_invariant': 0.0, 'apollo_train_annotations': 'data-apollocar3d/annotations/apollo_keypoints_66_train.json', 'apollo_val_annotations': 'data-apollocar3d/annotations/apollo_keypoints_66_val.json', 'apollo_train_image_dir': 'data-apollocar3d/images/train/', 'apollo_val_image_dir': 'data-apollocar3d/images/val/', 'apollo_square_edge': 513, 'apollo_extended_scale': False, 'apollo_orientation_invariant': 0.0, 'apollo_blur': 0.0, 'apollo_augmentation': True, 'apollo_rescale_images': 1.0, 'apollo_upsample': 1, 
'apollo_min_kp_anns': 1, 'apollo_bmin': 1, 'apollo_apply_local_centrality': False, 'apollo_eval_annotation_filter': True, 'apollo_eval_long_edge': 0, 'apollo_eval_extended_scale': False, 'apollo_eval_orientation_invariant': 0.0, 'apollo_use_24_kps': False, 'cifar10_root_dir': 'data-cifar10/', 'cifar10_download': False, 'cocodet_train_annotations': 'data-mscoco/annotations/instances_train2017.json', 'cocodet_val_annotations': 'data-mscoco/annotations/instances_val2017.json', 'cocodet_train_image_dir': 'data-mscoco/images/train2017/', 'cocodet_val_image_dir': 'data-mscoco/images/val2017/', 'cocodet_square_edge': 513, 'cocodet_extended_scale': False, 'cocodet_orientation_invariant': 0.0, 'cocodet_blur': 0.0, 'cocodet_augmentation': True, 'cocodet_rescale_images': 1.0, 'cocodet_upsample': 1, 'cocokp_train_annotations': 'data-mscoco/annotations/person_keypoints_train2017.json', 'cocokp_val_annotations': 'data-mscoco/annotations/person_keypoints_val2017.json', 'cocokp_train_image_dir': 'data-mscoco/images/train2017/', 'cocokp_val_image_dir': 'data-mscoco/images/val2017/', 'cocokp_square_edge': 385, 'cocokp_with_dense': False, 'cocokp_extended_scale': False, 'cocokp_orientation_invariant': 0.0, 'cocokp_blur': 0.0, 'cocokp_augmentation': True, 'cocokp_rescale_images': 1.0, 'cocokp_upsample': 1, 'cocokp_min_kp_anns': 1, 'cocokp_bmin': 0.1, 'cocokp_eval_test2017': False, 'cocokp_eval_testdev2017': False, 'coco_eval_annotation_filter': True, 'coco_eval_long_edge': 641, 'coco_eval_extended_scale': False, 'coco_eval_orientation_invariant': 0.0, 'crowdpose_train_annotations': 'data-crowdpose/json/crowdpose_train.json', 'crowdpose_val_annotations': 'data-crowdpose/json/crowdpose_val.json', 'crowdpose_image_dir': 'data-crowdpose/images/', 'crowdpose_square_edge': 385, 'crowdpose_extended_scale': False, 'crowdpose_orientation_invariant': 0.0, 'crowdpose_augmentation': True, 'crowdpose_rescale_images': 1.0, 'crowdpose_upsample': 1, 'crowdpose_min_kp_anns': 1, 'crowdpose_eval_test': 
False, 'crowdpose_eval_long_edge': 641, 'crowdpose_eval_extended_scale': False, 'crowdpose_eval_orientation_invariant': 0.0, 'crowdpose_index': None, 'nuscenes_train_annotations': '../../../NuScenes/mscoco_style_annotations/nuimages_v1.0-train.json', 'nuscenes_val_annotations': '../../../NuScenes/mscoco_style_annotations/nuimages_v1.0-val.json', 'nuscenes_train_image_dir': '../../../NuScenes/nuimages-v1.0-all-samples', 'nuscenes_val_image_dir': '../../../NuScenes/nuimages-v1.0-all-samples', 'nuscenes_square_edge': 513, 'nuscenes_extended_scale': False, 'nuscenes_orientation_invariant': 0.0, 'nuscenes_blur': 0.0, 'nuscenes_augmentation': True, 'nuscenes_rescale_images': 1.0, 'nuscenes_upsample': 1, 'posetrack2018_train_annotations': 'data-posetrack2018/annotations/train/*.json', 'posetrack2018_val_annotations': 'data-posetrack2018/annotations/val/*.json', 'posetrack2018_eval_annotations': 'data-posetrack2018/annotations/val/*.json', 'posetrack2018_data_root': 'data-posetrack2018', 'posetrack_square_edge': 385, 'posetrack_with_dense': False, 'posetrack_augmentation': True, 'posetrack_rescale_images': 1.0, 'posetrack_upsample': 1, 'posetrack_min_kp_anns': 1, 'posetrack_bmin': 0.1, 'posetrack_sample_pairing': 0.0, 'posetrack_image_augmentations': 0.0, 'posetrack_max_shift': 30.0, 'posetrack_eval_long_edge': 801, 'posetrack_eval_extended_scale': False, 'posetrack_eval_orientation_invariant': 0.0, 'posetrack_ablation_without_tcaf': False, 'posetrack2017_eval_annotations': 'data-posetrack2017/annotations/val/*.json', 'posetrack2017_data_root': 'data-posetrack2017', 'cocokpst_max_shift': 30.0, 'wholebody_train_annotations': 'data-mscoco/annotations/person_keypoints_train2017_wholebody_pifpaf_style.json', 'wholebody_val_annotations': 'data-mscoco/annotations/coco_wholebody_val_v1.0.json', 'wholebody_train_image_dir': 'data-mscoco/images/train2017/', 'wholebody_val_image_dir': 'data-mscoco/images/val2017', 'wholebody_square_edge': 385, 'wholebody_extended_scale': False, 
'wholebody_orientation_invariant': 0.0, 'wholebody_blur': 0.0, 'wholebody_augmentation': True, 'wholebody_rescale_images': 1.0, 'wholebody_upsample': 1, 'wholebody_min_kp_anns': 1, 'wholebody_bmin': 1.0, 'wholebody_apply_local_centrality': False, 'wholebody_eval_test2017': False, 'wholebody_eval_testdev2017': False, 'wholebody_eval_annotation_filter': True, 'wholebody_eval_long_edge': 641, 'wholebody_eval_extended_scale': False, 'wholebody_eval_orientation_invariant': 0.0, 'save_all': None, 'show': False, 'image_width': None, 'image_height': None, 'image_dpi_factor': 2.0, 'image_min_dpi': 50.0, 'show_file_extension': 'jpeg', 'textbox_alpha': 0.5, 'text_color': 'white', 'font_size': 8, 'monocolor_connections': False, 'line_width': None, 'skeleton_solid_threshold': 0.5, 'show_box': False, 'white_overlay': False, 'show_joint_scales': False, 'show_joint_confidences': False, 'show_decoding_order': False, 'show_frontier_order': False, 'show_only_decoded_connections': False, 'video_fps': 10, 'video_dpi': 100, 'debug_indices': [], 'device': device(type='cpu'), 'pin_memory': False}, 'version': '0.13.11', 'plugin_versions': {'openpifpaf_extras': '0.0.3'}, 'hostname': 'fv-az576-186'}

INFO:openpifpaf.optimize:SGD optimizer

INFO:openpifpaf.optimize:training batches per epoch = 3125

INFO:openpifpaf.network.trainer:{'type': 'config', 'field_names': ['cifar10.cifdet.c', 'cifar10.cifdet.vec']}

INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch000

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 0, 'n_batches': 3125, 'time': 0.063, 'data_time': 0.104, 'lr': 3e-07, 'loss': 68.479, 'head_losses': [1.965, 66.514]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 50, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.002, 'lr': 9.1e-07, 'loss': 68.305, 'head_losses': [2.003, 66.302]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 100, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.002, 'lr': 2.74e-06, 'loss': 68.265, 'head_losses': [1.975, 66.29]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 150, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.002, 'lr': 8.26e-06, 'loss': 68.034, 'head_losses': [2.006, 66.027]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 200, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.002, 'lr': 2.495e-05, 'loss': 67.571, 'head_losses': [2.024, 65.547]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 250, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 7.536e-05, 'loss': 66.389, 'head_losses': [2.028, 64.361]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 300, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.003, 'lr': 0.00022757, 'loss': 55.563, 'head_losses': [7.007, 48.555]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 350, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 0.0003, 'loss': 27.776, 'head_losses': [-0.441, 28.218]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 400, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': 11.966, 'head_losses': [-5.503, 17.47]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 450, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 0.0003, 'loss': -3.442, 'head_losses': [-7.861, 4.419]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 500, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -5.929, 'head_losses': [-8.492, 2.563]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 550, 'n_batches': 3125, 'time': 0.04, 'data_time': 0.004, 'lr': 0.0003, 'loss': -1.603, 'head_losses': [-8.597, 6.994]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 600, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.003, 'lr': 0.0003, 'loss': -5.596, 'head_losses': [-8.743, 3.147]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 650, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.002, 'lr': 0.0003, 'loss': -7.535, 'head_losses': [-9.092, 1.558]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 700, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 0.0003, 'loss': -7.992, 'head_losses': [-9.171, 1.179]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 750, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.69, 'head_losses': [-9.153, 0.463]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 800, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.003, 'lr': 0.0003, 'loss': -3.968, 'head_losses': [-9.131, 5.163]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 850, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.003, 'lr': 0.0003, 'loss': -8.664, 'head_losses': [-8.957, 0.293]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 900, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 0.0003, 'loss': -7.916, 'head_losses': [-9.022, 1.107]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 950, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': 1.644, 'head_losses': [-9.284, 10.928]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1000, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 0.0003, 'loss': -6.126, 'head_losses': [-9.108, 2.983]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1050, 'n_batches': 3125, 'time': 0.037, 'data_time': 0.003, 'lr': 0.0003, 'loss': -8.705, 'head_losses': [-9.337, 0.632]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1100, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.003, 'lr': 0.0003, 'loss': -9.085, 'head_losses': [-9.369, 0.285]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1150, 'n_batches': 3125, 'time': 0.042, 'data_time': 0.004, 'lr': 0.0003, 'loss': -8.593, 'head_losses': [-9.548, 0.955]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1200, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.028, 'head_losses': [-9.411, 1.383]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1250, 'n_batches': 3125, 'time': 0.023, 'data_time': 0.012, 'lr': 0.0003, 'loss': -9.243, 'head_losses': [-9.513, 0.27]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1300, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.849, 'head_losses': [-9.193, 0.345]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1350, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.769, 'head_losses': [-9.393, 0.624]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1400, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.005, 'lr': 0.0003, 'loss': -8.921, 'head_losses': [-9.054, 0.133]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1450, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.491, 'head_losses': [-9.405, -0.086]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1500, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.004, 'lr': 0.0003, 'loss': -8.842, 'head_losses': [-9.599, 0.757]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1550, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.003, 'lr': 0.0003, 'loss': -8.56, 'head_losses': [-9.521, 0.961]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1600, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.003, 'lr': 0.0003, 'loss': -9.085, 'head_losses': [-9.326, 0.241]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1650, 'n_batches': 3125, 'time': 0.039, 'data_time': 0.008, 'lr': 0.0003, 'loss': -9.0, 'head_losses': [-9.359, 0.358]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1700, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.682, 'head_losses': [-9.681, -0.001]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1750, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.424, 'head_losses': [-9.483, 0.059]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1800, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.092, 'head_losses': [-9.315, 0.224]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1850, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.006, 'lr': 0.0003, 'loss': -8.798, 'head_losses': [-9.585, 0.787]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1900, 'n_batches': 3125, 'time': 0.04, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.443, 'head_losses': [-9.502, 0.06]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1950, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.452, 'head_losses': [-9.655, 1.203]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2000, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.004, 'lr': 0.0003, 'loss': -9.347, 'head_losses': [-9.381, 0.034]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2050, 'n_batches': 3125, 'time': 0.024, 'data_time': 0.009, 'lr': 0.0003, 'loss': -9.553, 'head_losses': [-9.455, -0.098]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2100, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.003, 'lr': 0.0003, 'loss': -9.46, 'head_losses': [-9.323, -0.137]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2150, 'n_batches': 3125, 'time': 0.037, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.763, 'head_losses': [-9.745, -0.018]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2200, 'n_batches': 3125, 'time': 0.039, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.582, 'head_losses': [-9.449, -0.133]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2250, 'n_batches': 3125, 'time': 0.025, 'data_time': 0.006, 'lr': 0.0003, 'loss': -9.638, 'head_losses': [-9.498, -0.14]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2300, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.006, 'lr': 0.0003, 'loss': -9.286, 'head_losses': [-9.544, 0.258]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2350, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.609, 'head_losses': [-9.444, -0.165]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2400, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.231, 'head_losses': [-9.387, 0.156]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2450, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.597, 'head_losses': [-9.426, -0.171]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2500, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.761, 'head_losses': [-9.598, -0.163]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2550, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.203, 'head_losses': [-9.41, 0.207]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2600, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.503, 'head_losses': [-9.409, -0.094]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2650, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.004, 'lr': 0.0003, 'loss': -9.69, 'head_losses': [-9.533, -0.157]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2700, 'n_batches': 3125, 'time': 0.028, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.683, 'head_losses': [-9.521, -0.162]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2750, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.46, 'head_losses': [-9.407, -0.053]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2800, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.809, 'head_losses': [-9.613, 0.804]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2850, 'n_batches': 3125, 'time': 0.04, 'data_time': 0.003, 'lr': 0.0003, 'loss': -9.474, 'head_losses': [-9.663, 0.188]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2900, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.003, 'lr': 0.0003, 'loss': -9.659, 'head_losses': [-9.696, 0.037]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2950, 'n_batches': 3125, 'time': 0.025, 'data_time': 0.006, 'lr': 0.0003, 'loss': -8.486, 'head_losses': [-9.356, 0.87]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 3000, 'n_batches': 3125, 'time': 0.025, 'data_time': 0.006, 'lr': 0.0003, 'loss': -9.73, 'head_losses': [-10.007, 0.277]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 3050, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.571, 'head_losses': [-10.062, 1.491]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 3100, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.002, 'lr': 0.0003, 'loss': -7.831, 'head_losses': [-9.521, 1.69]}

INFO:openpifpaf.network.trainer:applying ema

INFO:openpifpaf.network.trainer:{'type': 'train-epoch', 'epoch': 1, 'loss': 0.11462, 'head_losses': [-7.92235, 8.03698], 'time': 110.1, 'n_clipped_grad': 0, 'max_norm': 0.0}

INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch001

INFO:openpifpaf.network.trainer:{'type': 'val-epoch', 'epoch': 1, 'loss': -9.92763, 'head_losses': [-9.82254, -0.10508], 'time': 16.9}

INFO:openpifpaf.network.trainer:restoring params from before ema

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 0, 'n_batches': 3125, 'time': 0.04, 'data_time': 0.081, 'lr': 0.0003, 'loss': -9.361, 'head_losses': [-9.932, 0.57]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 50, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.988, 'head_losses': [-10.16, 0.172]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 100, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.619, 'head_losses': [-10.096, 0.476]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 150, 'n_batches': 3125, 'time': 0.028, 'data_time': 0.006, 'lr': 0.0003, 'loss': -9.306, 'head_losses': [-9.831, 0.525]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 200, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.635, 'head_losses': [-9.776, 0.141]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 250, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.627, 'head_losses': [-9.959, 0.331]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 300, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.004, 'lr': 0.0003, 'loss': -9.201, 'head_losses': [-9.55, 0.349]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 350, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.613, 'head_losses': [-9.888, 0.275]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 400, 'n_batches': 3125, 'time': 0.037, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.671, 'head_losses': [-10.003, 0.332]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 450, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.003, 'lr': 0.0003, 'loss': -8.353, 'head_losses': [-9.67, 1.318]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 500, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.799, 'head_losses': [-9.744, -0.055]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 550, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.004, 'lr': 0.0003, 'loss': -9.976, 'head_losses': [-10.103, 0.127]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 600, 'n_batches': 3125, 'time': 0.038, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.95, 'head_losses': [-10.148, 0.198]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 650, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.702, 'head_losses': [-10.003, 0.302]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 700, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.157, 'head_losses': [-10.115, -0.042]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 750, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.824, 'head_losses': [-10.06, 0.236]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 800, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.005, 'lr': 0.0003, 'loss': -10.051, 'head_losses': [-10.394, 0.343]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 850, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.699, 'head_losses': [-10.121, 0.422]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 900, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.009, 'lr': 0.0003, 'loss': -9.431, 'head_losses': [-9.93, 0.499]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 950, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.674, 'head_losses': [-9.825, 0.151]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1000, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.378, 'head_losses': [-10.308, 0.93]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1050, 'n_batches': 3125, 'time': 0.028, 'data_time': 0.005, 'lr': 0.0003, 'loss': -10.048, 'head_losses': [-10.096, 0.048]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1100, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.543, 'head_losses': [-9.657, 0.114]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1150, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.006, 'lr': 0.0003, 'loss': -10.01, 'head_losses': [-10.163, 0.153]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1200, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.931, 'head_losses': [-9.774, 0.843]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1250, 'n_batches': 3125, 'time': 0.038, 'data_time': 0.004, 'lr': 0.0003, 'loss': -7.883, 'head_losses': [-10.23, 2.347]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1300, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.704, 'head_losses': [-10.11, 0.406]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1350, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.003, 'lr': 0.0003, 'loss': -9.735, 'head_losses': [-10.099, 0.365]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1400, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.192, 'head_losses': [-9.562, 0.37]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1450, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.003, 'lr': 0.0003, 'loss': -9.574, 'head_losses': [-10.027, 0.453]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1500, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.005, 'lr': 0.0003, 'loss': -10.033, 'head_losses': [-10.319, 0.287]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1550, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.613, 'head_losses': [-10.045, 0.432]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1600, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.56, 'head_losses': [-10.147, 0.587]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1650, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.064, 'head_losses': [-9.835, 0.771]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1700, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.913, 'head_losses': [-10.05, 0.138]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1750, 'n_batches': 3125, 'time': 0.026, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.887, 'head_losses': [-10.203, 0.317]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1800, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.457, 'head_losses': [-10.223, 0.766]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1850, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.004, 'lr': 0.0003, 'loss': -8.558, 'head_losses': [-9.225, 0.667]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1900, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.6, 'head_losses': [-10.187, 0.587]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1950, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.799, 'head_losses': [-10.285, 0.486]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2000, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.005, 'lr': 0.0003, 'loss': -10.161, 'head_losses': [-10.293, 0.133]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2050, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.732, 'head_losses': [-10.377, 0.646]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2100, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.29, 'head_losses': [-10.344, 0.054]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2150, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.004, 'lr': 0.0003, 'loss': -7.314, 'head_losses': [-10.196, 2.882]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2200, 'n_batches': 3125, 'time': 0.028, 'data_time': 0.006, 'lr': 0.0003, 'loss': -10.122, 'head_losses': [-10.36, 0.238]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2250, 'n_batches': 3125, 'time': 0.044, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.007, 'head_losses': [-10.493, 0.486]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2300, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.913, 'head_losses': [-10.414, 0.5]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2350, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.916, 'head_losses': [-10.208, 0.292]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2400, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.187, 'head_losses': [-10.588, 0.401]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2450, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.525, 'head_losses': [-9.951, 0.426]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2500, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.613, 'head_losses': [-10.203, 0.59]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2550, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.006, 'lr': 0.0003, 'loss': -9.85, 'head_losses': [-10.147, 0.298]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2600, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.239, 'head_losses': [-9.939, 0.7]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2650, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.208, 'head_losses': [-10.63, 0.422]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2700, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.19, 'head_losses': [-10.489, 0.299]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2750, 'n_batches': 3125, 'time': 0.028, 'data_time': 0.005, 'lr': 0.0003, 'loss': -10.096, 'head_losses': [-10.209, 0.113]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2800, 'n_batches': 3125, 'time': 0.04, 'data_time': 0.004, 'lr': 0.0003, 'loss': -10.469, 'head_losses': [-10.61, 0.141]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2850, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.75, 'head_losses': [-10.773, 0.024]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2900, 'n_batches': 3125, 'time': 0.028, 'data_time': 0.004, 'lr': 0.0003, 'loss': -10.542, 'head_losses': [-10.797, 0.255]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2950, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.003, 'lr': 0.0003, 'loss': -10.126, 'head_losses': [-10.637, 0.51]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 3000, 'n_batches': 3125, 'time': 0.039, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.971, 'head_losses': [-10.338, 1.367]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 3050, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.006, 'lr': 0.0003, 'loss': -10.279, 'head_losses': [-10.327, 0.048]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 3100, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.002, 'lr': 0.0003, 'loss': -6.325, 'head_losses': [-10.317, 3.992]}

INFO:openpifpaf.network.trainer:applying ema

INFO:openpifpaf.network.trainer:{'type': 'train-epoch', 'epoch': 2, 'loss': -9.63679, 'head_losses': [-10.09738, 0.46059], 'time': 110.7, 'n_clipped_grad': 0, 'max_norm': 0.0}

INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch002

INFO:openpifpaf.network.trainer:{'type': 'val-epoch', 'epoch': 2, 'loss': -10.59119, 'head_losses': [-10.46299, -0.1282], 'time': 16.1}

INFO:openpifpaf.network.trainer:restoring params from before ema

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 0, 'n_batches': 3125, 'time': 0.04, 'data_time': 0.083, 'lr': 0.0003, 'loss': -10.46, 'head_losses': [-10.637, 0.177]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 50, 'n_batches': 3125, 'time': 0.039, 'data_time': 0.003, 'lr': 0.00020755, 'loss': -10.423, 'head_losses': [-10.363, -0.06]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 100, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 0.00014359, 'loss': -9.55, 'head_losses': [-9.484, -0.066]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 150, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 9.934e-05, 'loss': -10.623, 'head_losses': [-10.489, -0.134]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 200, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.002, 'lr': 6.873e-05, 'loss': -10.106, 'head_losses': [-10.077, -0.029]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 250, 'n_batches': 3125, 'time': 0.047, 'data_time': 0.003, 'lr': 4.755e-05, 'loss': -10.387, 'head_losses': [-10.251, -0.137]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 300, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.002, 'lr': 3.289e-05, 'loss': -10.056, 'head_losses': [-9.924, -0.133]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 350, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.002, 'lr': 3e-05, 'loss': -9.919, 'head_losses': [-9.782, -0.137]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 400, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.006, 'lr': 3e-05, 'loss': -10.326, 'head_losses': [-10.178, -0.147]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 450, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.005, 'lr': 3e-05, 'loss': -11.246, 'head_losses': [-11.131, -0.115]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 500, 'n_batches': 3125, 'time': 0.028, 'data_time': 0.006, 'lr': 3e-05, 'loss': -10.266, 'head_losses': [-10.168, -0.097]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 550, 'n_batches': 3125, 'time': 0.037, 'data_time': 0.003, 'lr': 3e-05, 'loss': -10.63, 'head_losses': [-10.491, -0.139]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 600, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.004, 'lr': 3e-05, 'loss': -10.6, 'head_losses': [-10.459, -0.141]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 650, 'n_batches': 3125, 'time': 0.022, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.707, 'head_losses': [-10.564, -0.143]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 700, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.664, 'head_losses': [-10.51, -0.154]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 750, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.299, 'head_losses': [-10.142, -0.156]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 800, 'n_batches': 3125, 'time': 0.041, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.092, 'head_losses': [-9.974, -0.118]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 850, 'n_batches': 3125, 'time': 0.041, 'data_time': 0.005, 'lr': 3e-05, 'loss': -10.388, 'head_losses': [-10.315, -0.073]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 900, 'n_batches': 3125, 'time': 0.043, 'data_time': 0.006, 'lr': 3e-05, 'loss': -11.087, 'head_losses': [-10.942, -0.145]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 950, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.002, 'lr': 3e-05, 'loss': -11.229, 'head_losses': [-11.082, -0.147]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1000, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.004, 'lr': 3e-05, 'loss': -10.32, 'head_losses': [-10.183, -0.137]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1050, 'n_batches': 3125, 'time': 0.042, 'data_time': 0.005, 'lr': 3e-05, 'loss': -10.396, 'head_losses': [-10.26, -0.136]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1100, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.002, 'lr': 3e-05, 'loss': -11.133, 'head_losses': [-10.966, -0.167]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1150, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.747, 'head_losses': [-10.583, -0.164]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1200, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.87, 'head_losses': [-10.73, -0.14]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1250, 'n_batches': 3125, 'time': 0.026, 'data_time': 0.006, 'lr': 3e-05, 'loss': -10.705, 'head_losses': [-10.555, -0.15]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1300, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.004, 'lr': 3e-05, 'loss': -11.085, 'head_losses': [-10.912, -0.172]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1350, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.003, 'lr': 3e-05, 'loss': -10.979, 'head_losses': [-10.82, -0.159]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1400, 'n_batches': 3125, 'time': 0.039, 'data_time': 0.004, 'lr': 3e-05, 'loss': -10.509, 'head_losses': [-10.346, -0.163]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1450, 'n_batches': 3125, 'time': 0.028, 'data_time': 0.01, 'lr': 3e-05, 'loss': -10.807, 'head_losses': [-10.666, -0.142]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1500, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.143, 'head_losses': [-10.091, -0.052]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1550, 'n_batches': 3125, 'time': 0.036, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.766, 'head_losses': [-10.612, -0.154]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1600, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.006, 'lr': 2.276e-05, 'loss': -10.511, 'head_losses': [-10.384, -0.127]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1650, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.005, 'lr': 1.574e-05, 'loss': -10.734, 'head_losses': [-10.573, -0.161]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1700, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 1.089e-05, 'loss': -10.506, 'head_losses': [-10.352, -0.154]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1750, 'n_batches': 3125, 'time': 0.038, 'data_time': 0.01, 'lr': 7.54e-06, 'loss': -10.531, 'head_losses': [-10.396, -0.135]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1800, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 5.21e-06, 'loss': -9.933, 'head_losses': [-9.784, -0.149]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1850, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.003, 'lr': 3.61e-06, 'loss': -10.124, 'head_losses': [-9.982, -0.142]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1900, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.003, 'lr': 3e-06, 'loss': -10.288, 'head_losses': [-10.126, -0.163]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1950, 'n_batches': 3125, 'time': 0.037, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.254, 'head_losses': [-10.095, -0.16]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2000, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.003, 'lr': 3e-06, 'loss': -10.61, 'head_losses': [-10.453, -0.157]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2050, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.004, 'lr': 3e-06, 'loss': -10.671, 'head_losses': [-10.494, -0.177]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2100, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.479, 'head_losses': [-10.342, -0.137]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2150, 'n_batches': 3125, 'time': 0.026, 'data_time': 0.007, 'lr': 3e-06, 'loss': -10.878, 'head_losses': [-10.728, -0.15]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2200, 'n_batches': 3125, 'time': 0.043, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.786, 'head_losses': [-10.613, -0.173]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2250, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.803, 'head_losses': [-10.642, -0.161]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2300, 'n_batches': 3125, 'time': 0.039, 'data_time': 0.003, 'lr': 3e-06, 'loss': -10.689, 'head_losses': [-10.544, -0.145]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2350, 'n_batches': 3125, 'time': 0.026, 'data_time': 0.005, 'lr': 3e-06, 'loss': -11.084, 'head_losses': [-10.93, -0.154]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2400, 'n_batches': 3125, 'time': 0.032, 'data_time': 0.003, 'lr': 3e-06, 'loss': -10.204, 'head_losses': [-10.034, -0.169]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2450, 'n_batches': 3125, 'time': 0.029, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.413, 'head_losses': [-10.257, -0.156]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2500, 'n_batches': 3125, 'time': 0.031, 'data_time': 0.004, 'lr': 3e-06, 'loss': -11.217, 'head_losses': [-11.056, -0.161]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2550, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.007, 'lr': 3e-06, 'loss': -10.845, 'head_losses': [-10.679, -0.165]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2600, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.824, 'head_losses': [-10.657, -0.166]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2650, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.265, 'head_losses': [-10.114, -0.152]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2700, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.864, 'head_losses': [-10.726, -0.138]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2750, 'n_batches': 3125, 'time': 0.033, 'data_time': 0.003, 'lr': 3e-06, 'loss': -11.068, 'head_losses': [-10.9, -0.168]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2800, 'n_batches': 3125, 'time': 0.037, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.563, 'head_losses': [-10.403, -0.16]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2850, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 3e-06, 'loss': -10.42, 'head_losses': [-10.257, -0.163]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2900, 'n_batches': 3125, 'time': 0.034, 'data_time': 0.003, 'lr': 3e-06, 'loss': -10.884, 'head_losses': [-10.722, -0.163]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2950, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.005, 'lr': 3e-06, 'loss': -11.055, 'head_losses': [-10.931, -0.125]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 3000, 'n_batches': 3125, 'time': 0.03, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.637, 'head_losses': [-10.473, -0.164]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 3050, 'n_batches': 3125, 'time': 0.027, 'data_time': 0.012, 'lr': 3e-06, 'loss': -10.676, 'head_losses': [-10.519, -0.158]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 3100, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.572, 'head_losses': [-10.531, -0.041]}

INFO:openpifpaf.network.trainer:applying ema

INFO:openpifpaf.network.trainer:{'type': 'train-epoch', 'epoch': 3, 'loss': -10.6664, 'head_losses': [-10.5265, -0.1399], 'time': 110.8, 'n_clipped_grad': 0, 'max_norm': 0.0}

INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch003

INFO:openpifpaf.network.trainer:{'type': 'val-epoch', 'epoch': 3, 'loss': -10.71402, 'head_losses': [-10.55684, -0.15719], 'time': 17.0}

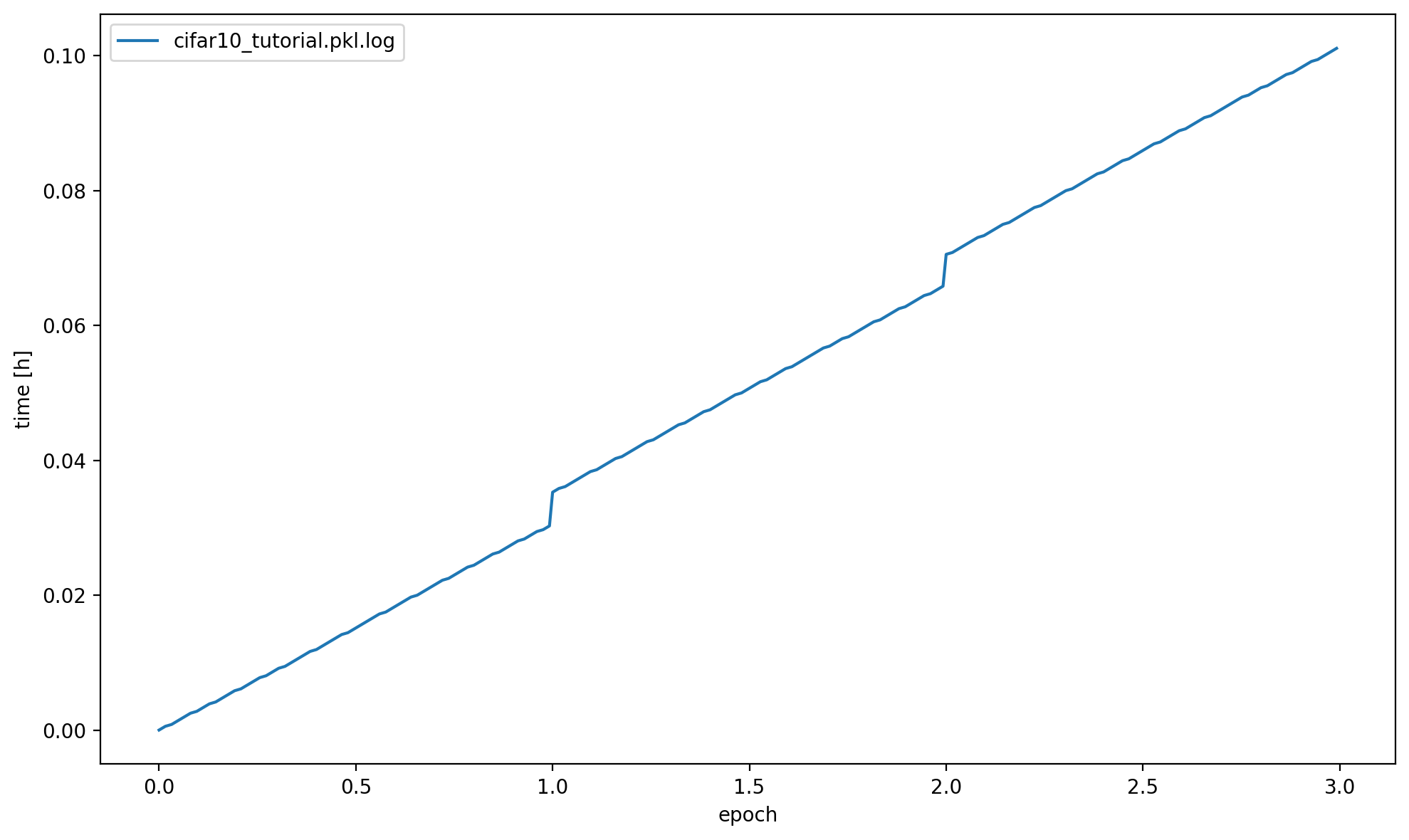

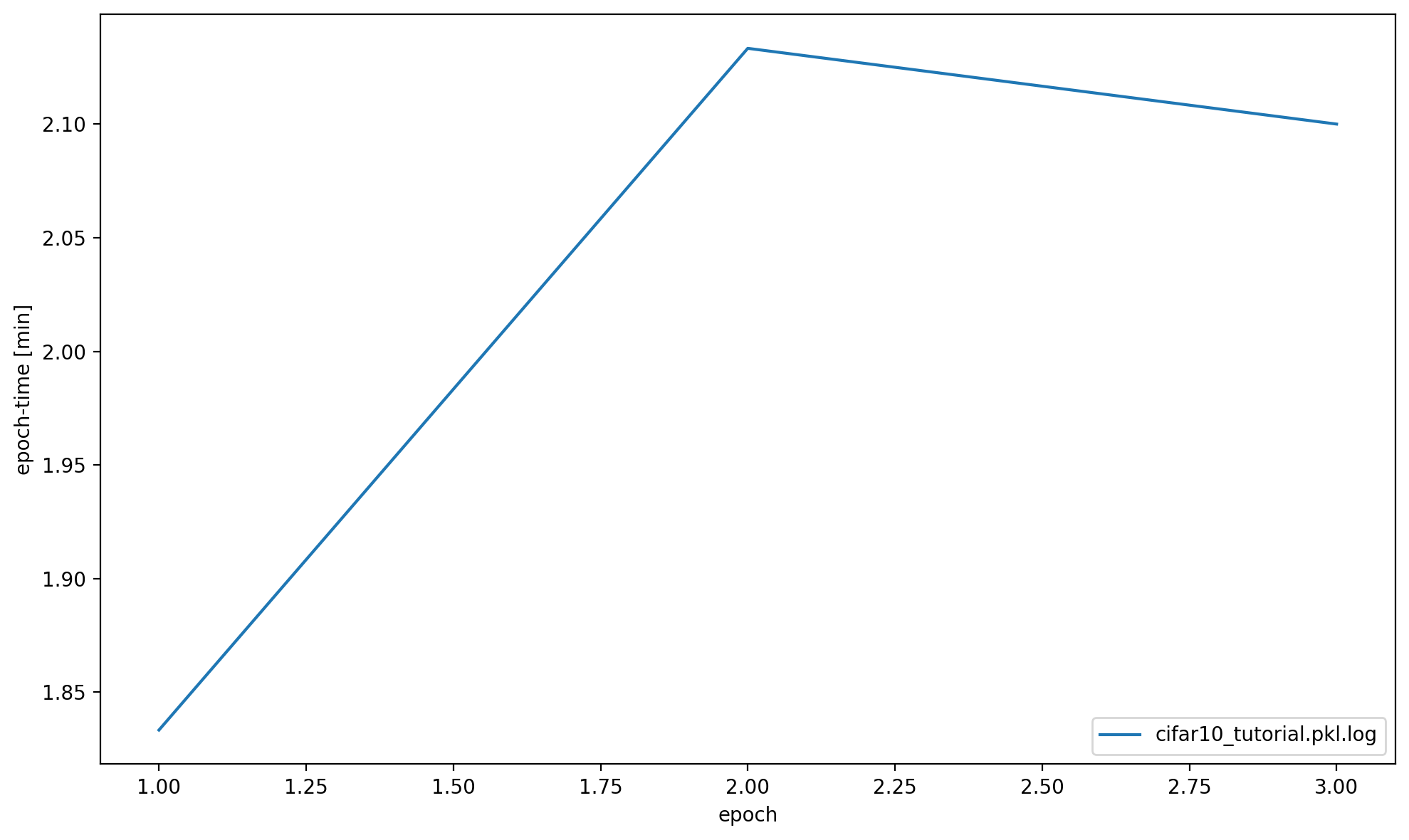

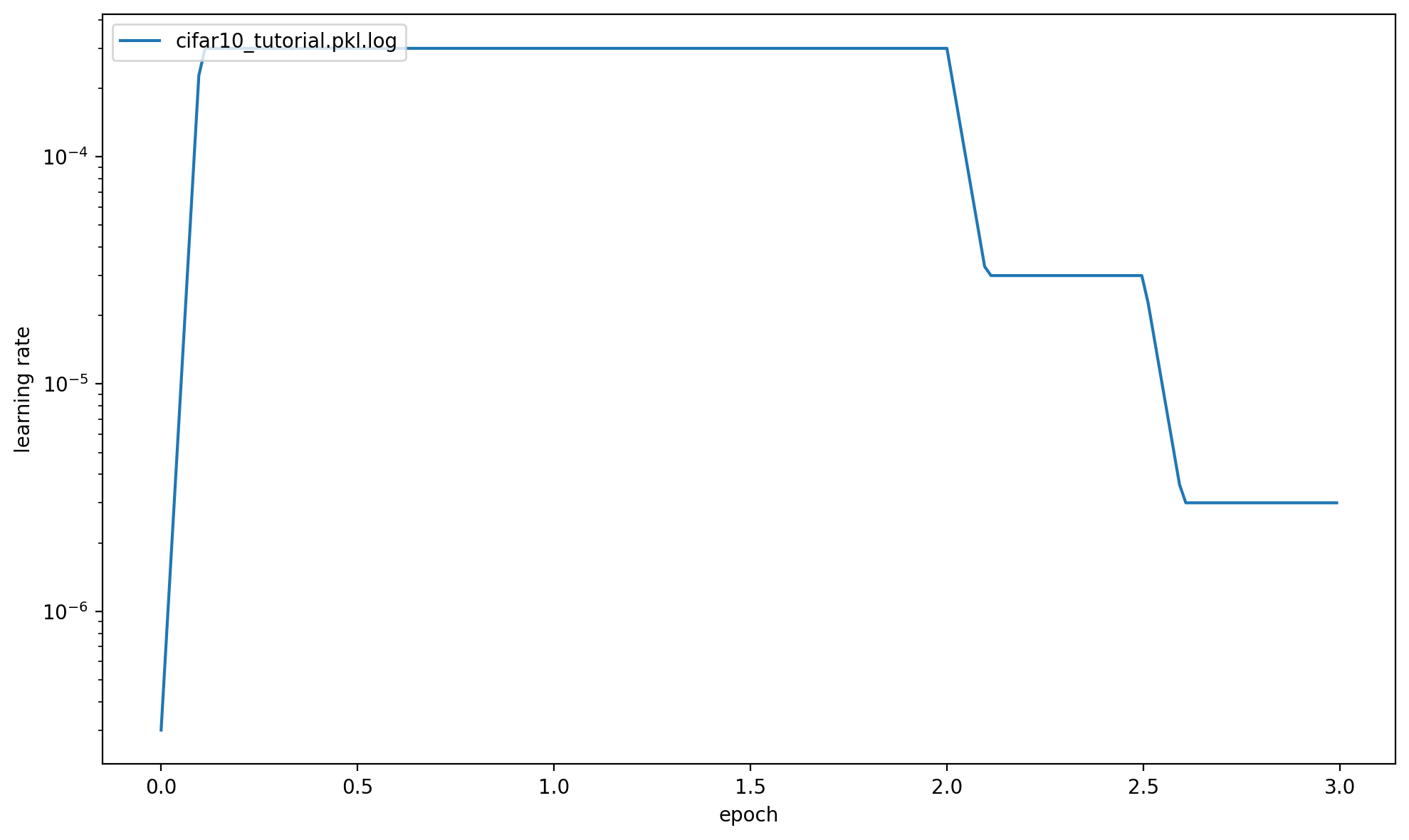

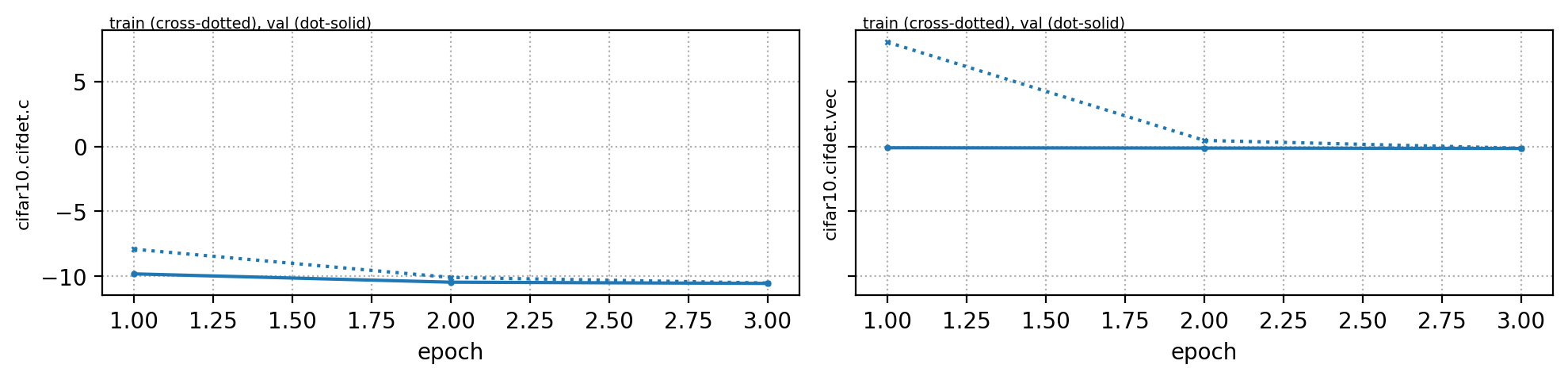

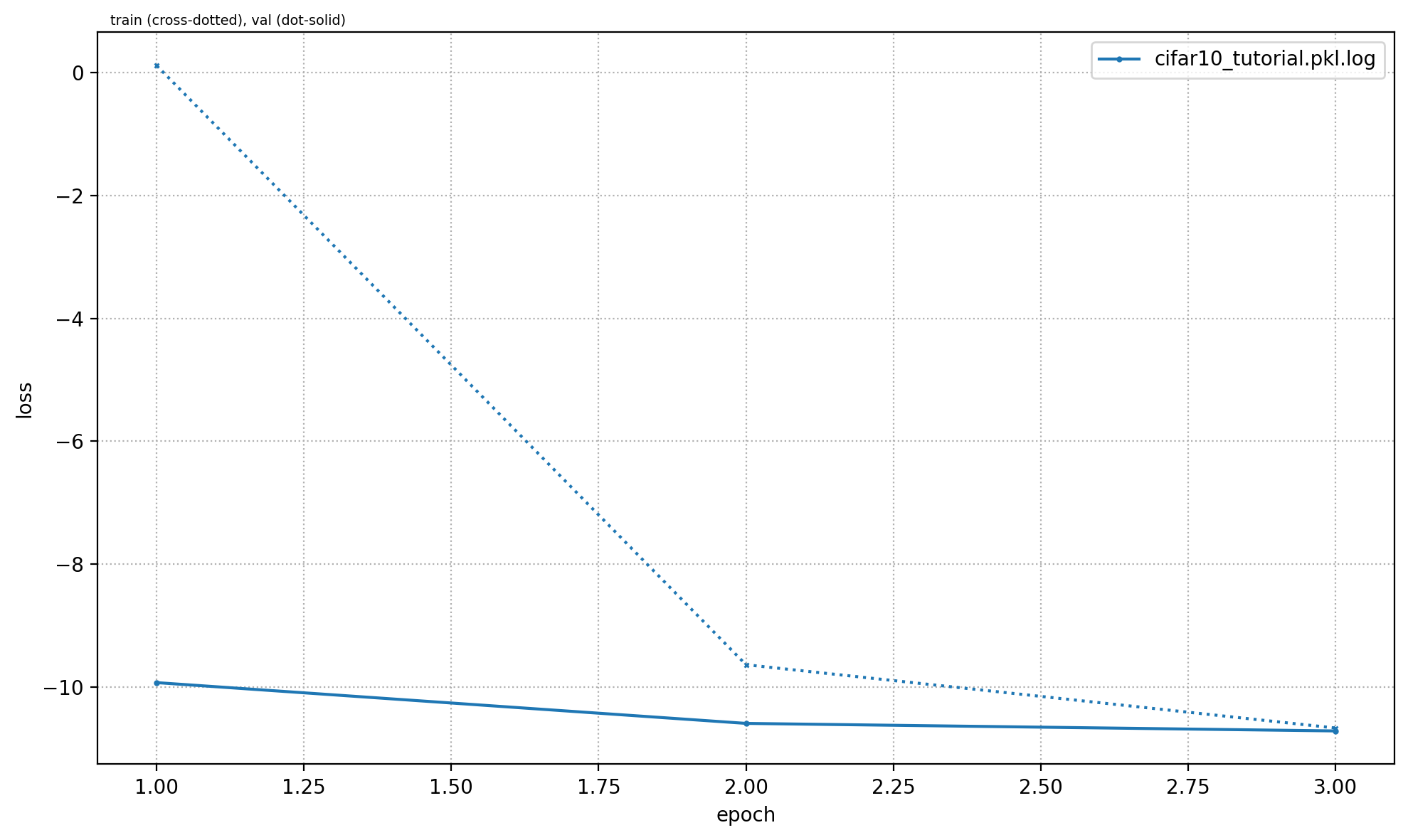

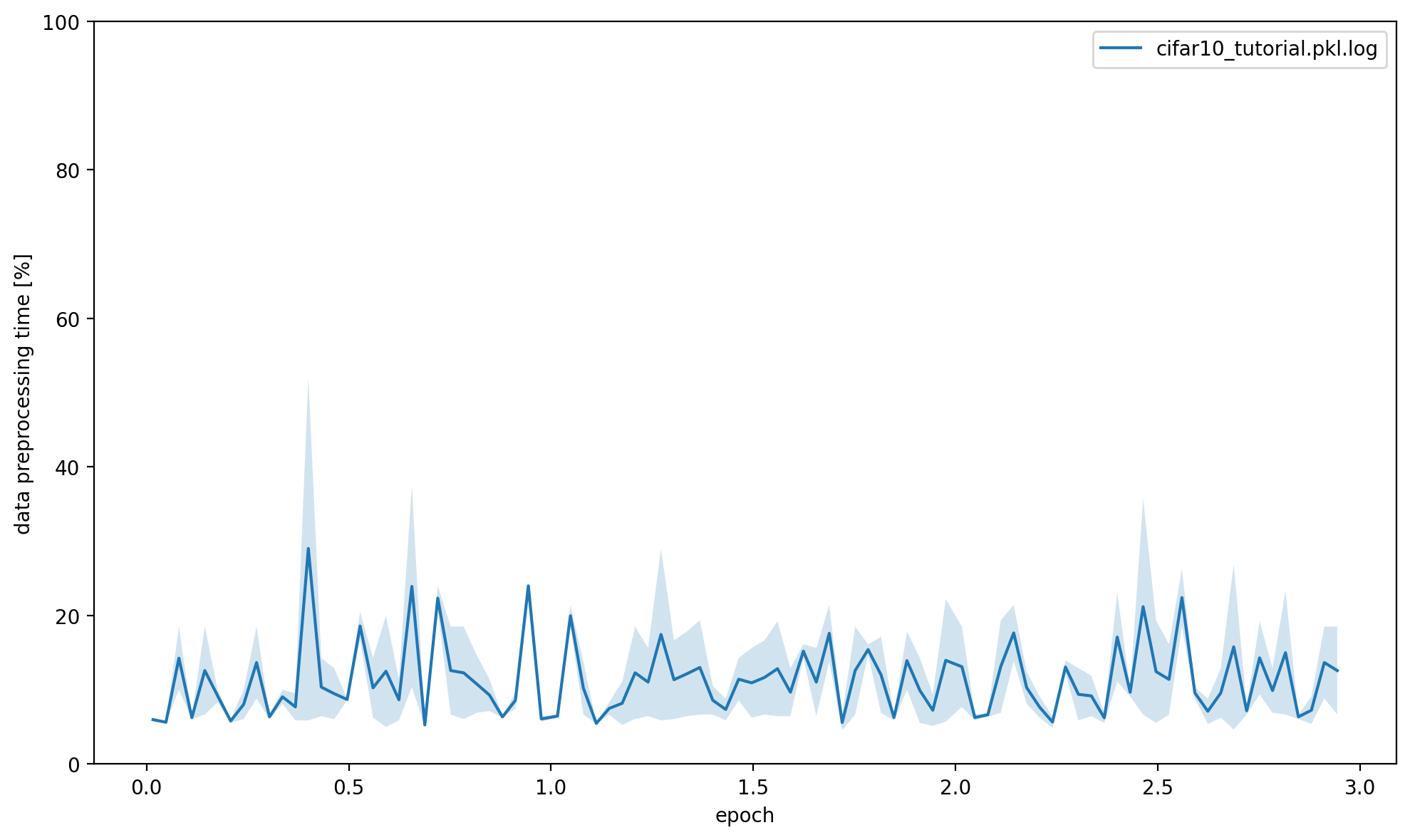

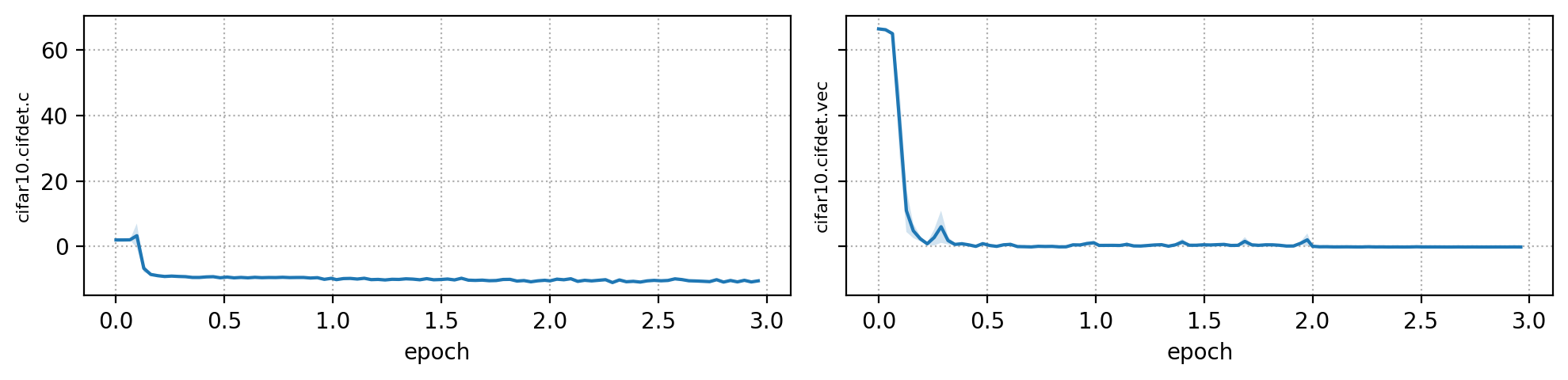

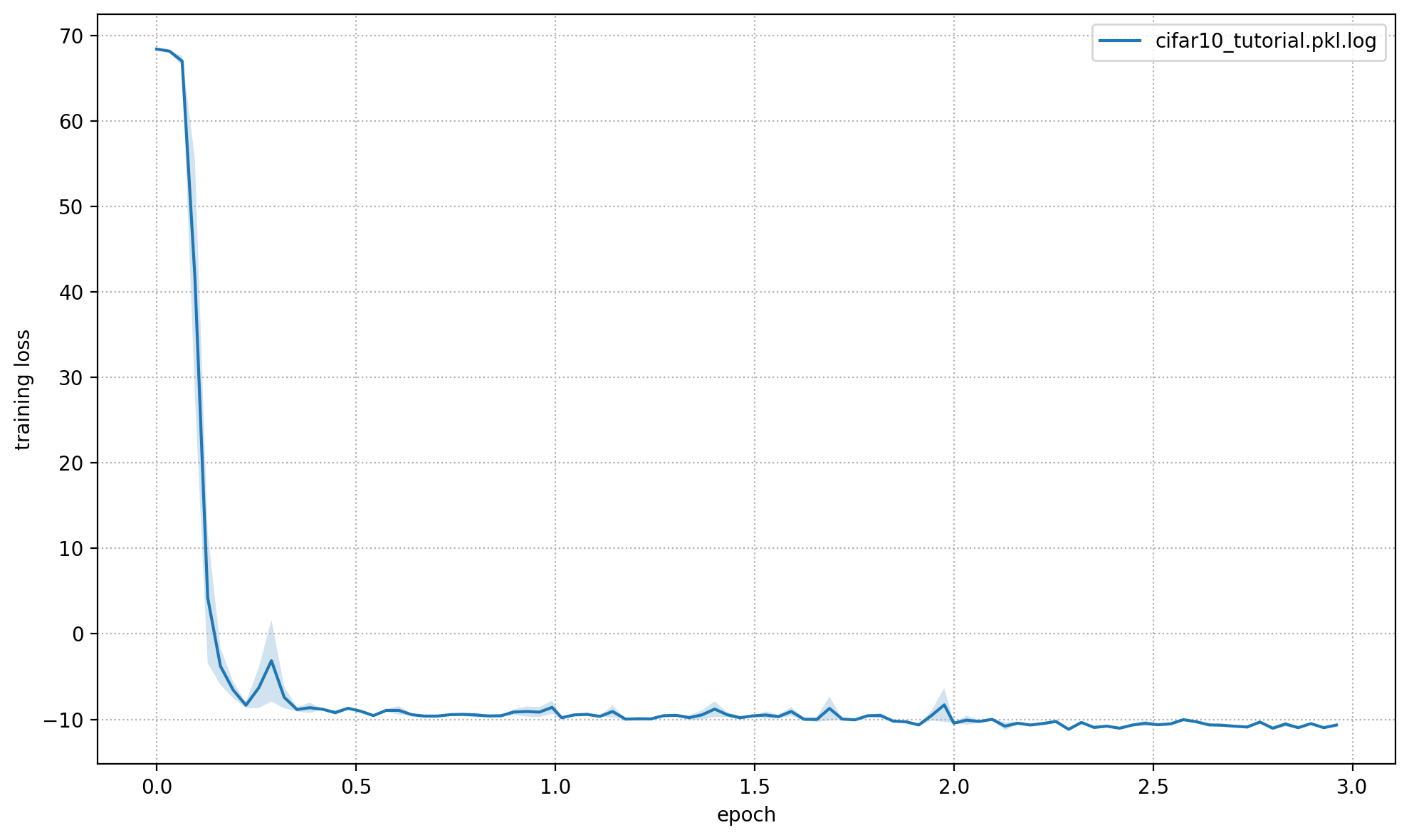
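The trainer records printed above are Python dict literals behind a fixed logger prefix, so they can be summarized with a few lines of standard-library code. The following is a minimal sketch, not part of OpenPifPaf: `parse_records` is a hypothetical helper, and the sample lines are copied from the output above. It also checks that, in these records, the total loss equals the sum of the two head losses.

```python
import ast

PREFIX = "INFO:openpifpaf.network.trainer:"


def parse_records(lines):
    """Return the dict payloads of trainer log lines, skipping plain messages."""
    records = []
    for line in lines:
        if not line.startswith(PREFIX):
            continue
        payload = line[len(PREFIX):].strip()
        if not payload.startswith("{"):
            continue  # plain messages such as "applying ema"
        records.append(ast.literal_eval(payload))
    return records


# Lines copied from the training output above.
sample = [
    "INFO:openpifpaf.network.trainer:applying ema",
    "INFO:openpifpaf.network.trainer:{'type': 'train-epoch', 'epoch': 3, 'loss': -10.6664, 'head_losses': [-10.5265, -0.1399], 'time': 110.8, 'n_clipped_grad': 0, 'max_norm': 0.0}",
    "INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch003",
    "INFO:openpifpaf.network.trainer:{'type': 'val-epoch', 'epoch': 3, 'loss': -10.71402, 'head_losses': [-10.55684, -0.15719], 'time': 17.0}",
]

records = parse_records(sample)

# Collect the per-epoch summaries by record type.
epoch_losses = {r["type"]: r["loss"] for r in records if r["type"].endswith("-epoch")}
print(epoch_losses)

# Largest gap between the total loss and the sum of its head losses.
max_gap = max(abs(r["loss"] - sum(r["head_losses"])) for r in records)
print(max_gap)
```

The same function can be applied to captured notebook or terminal output, for example to compare the `train-epoch` and `val-epoch` losses across epochs.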

Plot Training Logs#

You can create a set of plots from the command line with python -m openpifpaf.logs cifar10_tutorial.pkl.log. You can also overlay multiple runs. Below we call the plotting code from that command directly to show the output in this notebook.

openpifpaf.logs.Plots(['cifar10_tutorial.pkl.log']).show_all()

{'cifar10_tutorial.pkl.log': ['--dataset=cifar10',

'--basenet=cifar10net',

'--log-interval=50',

'--epochs=3',

'--lr=0.0003',

'--momentum=0.95',

'--batch-size=16',

'--lr-warm-up-epochs=0.1',

'--lr-decay',

'2.0',

'2.5',

'--lr-decay-epochs=0.1',

'--loader-workers=2',

'--output=cifar10_tutorial.pkl']}

cifar10_tutorial.pkl.log: {'message': None, 'levelname': 'INFO', 'name': 'openpifpaf.network.trainer', 'asctime': '2023-02-05 17:48:57,159', 'type': 'train', 'epoch': 2, 'batch': 3100, 'n_batches': 3125, 'time': 0.035, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.572, 'head_losses': [-10.531, -0.041]}

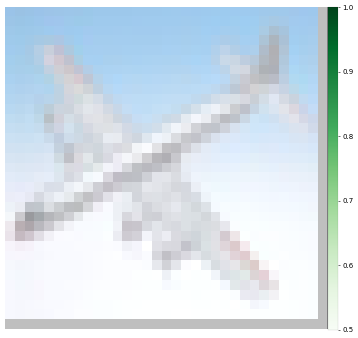

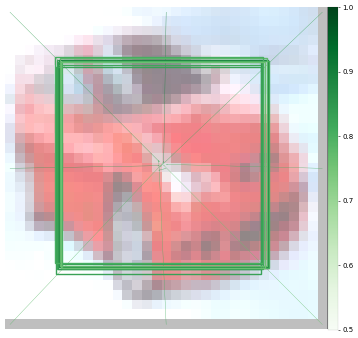
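The `lr` values in the training records are consistent with the arguments listed above: a base rate of 0.0003 that is reduced by a factor of 10 at epochs 2.0 and 2.5, with each reduction spread exponentially over 0.1 epochs. The sketch below reproduces the logged values under that reading. The factor of 10 and the exponential shape are inferred from the logged numbers rather than taken from OpenPifPaf's source, warm-up is ignored, and `learning_rate` is a hypothetical helper.

```python
N_BATCHES = 3125  # 'n_batches' in the training records


def learning_rate(epoch, base_lr=0.0003, decay_starts=(2.0, 2.5),
                  decay_epochs=0.1, decay_factor=0.1):
    """Learning rate at a fractional epoch (assumed schedule, warm-up ignored)."""
    lr = base_lr
    for start in decay_starts:
        # Fraction of this decay step that has been completed, clamped to [0, 1].
        progress = min(max((epoch - start) / decay_epochs, 0.0), 1.0)
        lr *= decay_factor ** progress
    return lr


# Batch index within epoch 2 mapped to the 'lr' reported in the log above.
logged = {50: 0.00020755, 100: 0.00014359, 350: 3e-05, 1600: 2.276e-05, 1900: 3e-06}

for batch, logged_lr in logged.items():
    epoch = 2 + batch / N_BATCHES
    print(f"batch {batch:4d}: sketch {learning_rate(epoch):.4g}, logged {logged_lr:.4g}")
```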

Prediction#

First, using the command-line interface (CLI):

%%bash

python -m openpifpaf.predict --checkpoint cifar10_tutorial.pkl.epoch003 images/cifar10_*.png --seed-threshold=0.1 --json-output . --quiet

WARNING:openpifpaf.decoder.cifcaf:consistency: decreasing keypoint threshold to seed threshold of 0.100000

%%bash

cat cifar10_*.json

[{"category_id": 1, "category": "plane", "score": 0.405, "bbox": [5.05, 5.06, 20.93, 21.01]}, {"category_id": 9, "category": "ship", "score": 0.386, "bbox": [4.97, 4.94, 21.04, 21.01]}, {"category_id": 3, "category": "bird", "score": 0.307, "bbox": [4.88, 4.92, 21.0, 21.01]}, {"category_id": 5, "category": "deer", "score": 0.248, "bbox": [5.08, 4.79, 20.99, 21.03]}, {"category_id": 4, "category": "cat", "score": 0.227, "bbox": [5.01, 5.09, 20.96, 20.88]}, {"category_id": 6, "category": "dog", "score": 0.183, "bbox": [4.93, 5.08, 21.01, 20.93]}, {"category_id": 10, "category": "truck", "score": 0.177, "bbox": [5.07, 5.05, 21.03, 20.95]}][{"category_id": 2, "category": "car", "score": 0.503, "bbox": [4.74, 5.87, 21.07, 20.97]}, {"category_id": 10, "category": "truck", "score": 0.475, "bbox": [4.88, 5.16, 20.86, 21.13]}, {"category_id": 9, "category": "ship", "score": 0.239, "bbox": [4.82, 5.45, 21.09, 20.88]}, {"category_id": 1, "category": "plane", "score": 0.187, "bbox": [5.04, 5.23, 21.0, 21.09]}][{"category_id": 9, "category": "ship", "score": 0.377, "bbox": [5.17, 4.76, 20.93, 20.97]}, {"category_id": 10, "category": "truck", "score": 0.355, "bbox": [5.04, 4.96, 20.94, 20.88]}, {"category_id": 1, "category": "plane", "score": 0.351, "bbox": [5.02, 5.05, 20.96, 21.0]}, {"category_id": 2, "category": "car", "score": 0.344, "bbox": [5.0, 5.1, 21.01, 21.02]}, {"category_id": 3, "category": "bird", "score": 0.188, "bbox": [5.09, 5.02, 20.9, 21.06]}, {"category_id": 8, "category": "horse", "score": 0.178, "bbox": [5.05, 4.94, 20.96, 20.94]}, {"category_id": 4, "category": "cat", "score": 0.152, "bbox": [5.04, 4.93, 20.96, 21.05]}][{"category_id": 10, "category": "truck", "score": 0.377, "bbox": [5.03, 5.06, 21.08, 20.96]}, {"category_id": 2, "category": "car", "score": 0.342, "bbox": [5.12, 4.94, 21.03, 20.99]}, {"category_id": 8, "category": "horse", "score": 0.323, "bbox": [5.07, 5.06, 20.87, 20.96]}, {"category_id": 1, "category": "plane", "score": 0.293, "bbox": 
[4.9, 4.97, 21.03, 20.99]}, {"category_id": 9, "category": "ship", "score": 0.283, "bbox": [4.82, 5.1, 20.96, 20.98]}, {"category_id": 4, "category": "cat", "score": 0.276, "bbox": [5.0, 5.03, 21.0, 20.98]}, {"category_id": 3, "category": "bird", "score": 0.239, "bbox": [4.88, 4.95, 20.88, 20.97]}, {"category_id": 6, "category": "dog", "score": 0.234, "bbox": [5.15, 4.96, 20.92, 21.0]}, {"category_id": 5, "category": "deer", "score": 0.22, "bbox": [4.83, 4.86, 20.96, 20.95]}, {"category_id": 7, "category": "frog", "score": 0.207, "bbox": [5.1, 5.07, 20.96, 20.94]}]
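Because `cat` concatenates the per-image files, the output above is a sequence of JSON arrays (`][` marks each file boundary) and not a single valid JSON document. A minimal sketch for reading such a stream with the standard library follows; `iter_json_documents` is a hypothetical helper, and the sample text is a shortened excerpt of the output above.

```python
import json


def iter_json_documents(text):
    """Yield each top-level JSON value from a string of concatenated documents."""
    decoder = json.JSONDecoder()
    pos = 0
    while pos < len(text):
        # Skip whitespace (including newlines) between documents.
        while pos < len(text) and text[pos].isspace():
            pos += 1
        if pos >= len(text):
            break
        document, pos = decoder.raw_decode(text, pos)
        yield document


# Shortened excerpt of the concatenated output: two images, two predictions each.
text = (
    '[{"category_id": 1, "category": "plane", "score": 0.405, "bbox": [5.05, 5.06, 20.93, 21.01]}, '
    '{"category_id": 9, "category": "ship", "score": 0.386, "bbox": [4.97, 4.94, 21.04, 21.01]}]'
    '[{"category_id": 2, "category": "car", "score": 0.503, "bbox": [4.74, 5.87, 21.07, 20.97]}, '
    '{"category_id": 10, "category": "truck", "score": 0.475, "bbox": [4.88, 5.16, 20.86, 21.13]}]'
)

per_image = list(iter_json_documents(text))

# Highest-scoring category for each image.
top_categories = [max(preds, key=lambda p: p["score"])["category"] for preds in per_image]
print(top_categories)
```

Reading each `cifar10_*.json` file separately with `json.load` avoids the problem entirely; the streaming approach is only needed when the files have already been concatenated.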

Next, using the Python API:

net_cpu, _ = openpifpaf.network.Factory(checkpoint='cifar10_tutorial.pkl.epoch003').factory()

preprocess = openpifpaf.transforms.Compose([

    openpifpaf.transforms.NormalizeAnnotations(),

    openpifpaf.transforms.CenterPadTight(16),

    openpifpaf.transforms.EVAL_TRANSFORM,

])

openpifpaf.decoder.utils.CifDetSeeds.set_threshold(0.3)

decode = openpifpaf.decoder.factory([hn.meta for hn in net_cpu.head_nets])

data = openpifpaf.datasets.ImageList([

    'images/cifar10_airplane4.png',

    'images/cifar10_automobile10.png',

    'images/cifar10_ship7.png',

    'images/cifar10_truck8.png',

], preprocess=preprocess)

for image, _, meta in data:

    predictions = decode.batch(net_cpu, image.unsqueeze(0))[0]

    print(['{} {:.0%}'.format(pred.category, pred.score) for pred in predictions])

['plane 41%', 'ship 39%', 'bird 31%']

['car 50%', 'truck 48%']

['ship 38%', 'truck 35%', 'plane 35%', 'car 34%']

['truck 38%', 'car 34%', 'horse 32%']

Evaluation#

I selected the images above because their categories are clear to me. Other images in CIFAR10 are harder to categorize, even for a human, and are therefore probably harder for a neural network as well.

Therefore, we should run a proper quantitative evaluation with openpifpaf.eval. It stores its output as a JSON file, so we print that file afterwards.

%%bash

python -m openpifpaf.eval --checkpoint cifar10_tutorial.pkl.epoch003 --dataset=cifar10 --seed-threshold=0.1 --instance-threshold=0.1 --quiet

WARNING:openpifpaf.decoder.cifcaf:consistency: decreasing keypoint threshold to seed threshold of 0.100000

cifar10_tutorial.pkl.epoch003.eval-cifar10.stats.json not found. Processing: cifar10_tutorial.pkl.epoch003

[INFO] Register count_convNd() for <class 'torch.nn.modules.conv.Conv2d'>.

%%bash

python -m json.tool cifar10_tutorial.pkl.epoch003.eval-cifar10.stats.json

{

"text_labels": [

"total",

"plane",

"car",

"bird",

"cat",

"deer",

"dog",

"frog",

"horse",

"ship",

"truck"

],

"stats": [

0.3887,

0.456,

0.611,

0.114,

0.238,

0.406,

0.394,

0.32,

0.412,

0.497,

0.439

],

"args": [

"/opt/hostedtoolcache/Python/3.8.16/x64/lib/python3.8/site-packages/openpifpaf/eval.py",

"--checkpoint",

"cifar10_tutorial.pkl.epoch003",

"--dataset=cifar10",

"--seed-threshold=0.1",

"--instance-threshold=0.1",

"--quiet"

],

"version": "0.13.11",

"dataset": "cifar10",

"total_time": 45.82286804199998,

"checkpoint": "cifar10_tutorial.pkl.epoch003",

"count_ops": [

421736880.0,

105180.0

],

"file_size": 437347,

"n_images": 10000,

"decoder_time": 9.482721551001077,

"nn_time": 20.26747093601034

}

We see that some categories like “plane”, “car” and “ship” are learned quickly, whereas others are learned poorly (e.g. “bird”). The poor performance is not surprising, as we trained our network for only three epochs.
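To make the per-category comparison explicit, the `text_labels` and `stats` lists from the JSON above can be paired and sorted. This is a small sketch using the values copied from that output. It also shows that, for these numbers, the "total" entry equals the mean of the ten per-category values.

```python
# Values copied from cifar10_tutorial.pkl.epoch003.eval-cifar10.stats.json above.
text_labels = ["total", "plane", "car", "bird", "cat", "deer",
               "dog", "frog", "horse", "ship", "truck"]
stats = [0.3887, 0.456, 0.611, 0.114, 0.238, 0.406,
         0.394, 0.32, 0.412, 0.497, 0.439]

# Pair every category with its score, leaving out the leading "total" entry.
per_category = dict(zip(text_labels[1:], stats[1:]))

# Rank the categories from best to worst.
ranked = sorted(per_category.items(), key=lambda item: item[1], reverse=True)
for name, score in ranked:
    print(f"{name:>6}: {score:.3f}")

# Mean over the ten categories, for comparison with the reported total.
mean_score = sum(per_category.values()) / len(per_category)
print(f"mean over categories: {mean_score:.4f} (reported total: {stats[0]})")
```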