Custom Dataset

Custom Dataset#

Overview#

In this section of the guide, we will see how to train and evaluate OpenPifPaf on a custom dataset. OpenPifPaf follows a plugin architecture: the overall system is composed of a core component and auxiliary plugin modules. To train a model on a custom dataset, you don’t need to change the core system; you only need to create a small plugin for it. This tutorial goes through the steps required to create a new plugin for a custom dataset.

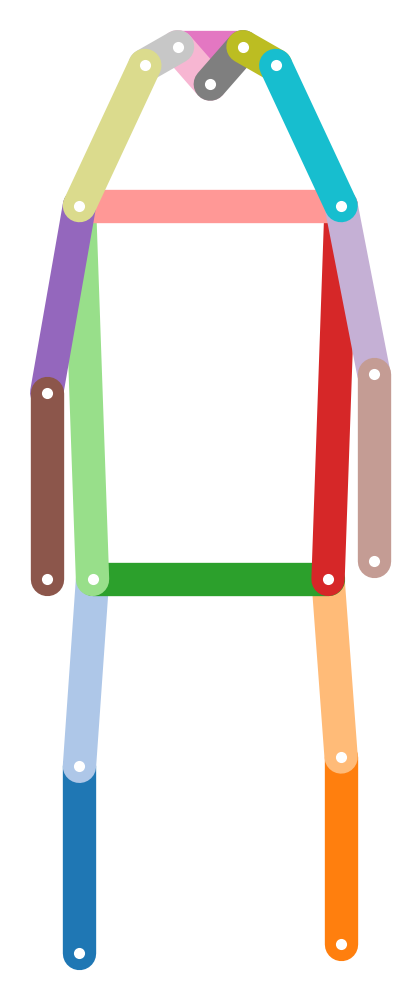

As a case study, let’s go through the steps of implementing a 2D pose estimator for vehicles. If you are interested in how this specific plugin works, please check its guide section. We suggest creating and debugging your own plugin by copying a pre-existing plugin, changing its name, and adapting its files to your needs. Below is a description of the plugin structure, to give you some intuition of what you will need to change.

Plugin structure#

1. Data Module#

This module handles the interface between your custom dataset and the core system, and it is the main component of the plugin. For the ApolloCar3D dataset, we created a module called apollo_kp.py containing the class ApolloKp, which inherits from the DataModule class.

The base class to inherit from has the following structure:

- class openpifpaf.datasets.DataModule

Base class to extend OpenPifPaf with custom data.

This class gives you all the handles to train OpenPifPaf on a new dataset. Create a new class that inherits from this to handle a new dataset.

Define the PifPaf heads you would like to train. For example, CIF (Composite Intensity Fields) to detect keypoints, and CAF (Composite Association Fields) to associate joints.

Add class variables, such as annotations, training/validation image paths.

- classmethod cli(parser: argparse.ArgumentParser)

Command line interface (CLI) to extend argument parser for your custom dataset.

Make sure to use unique CLI arguments for your dataset. For clarity, we suggest starting every CLI argument with the name of your new dataset, e.g. --<dataset_name>-train-annotations.

All OpenPifPaf commands will still work. For example, to load a model, there is no need to implement the --checkpoint argument.

- classmethod configure(args: argparse.Namespace)

Take the parsed argument parser output and configure class variables.

- metrics() → List[openpifpaf.metric.base.Base]

Define a list of metrics to be used for eval.

- train_loader() → torch.utils.data.dataloader.DataLoader

Loader of the training dataset.

A COCO data loader is already available, or a custom one can be created and called here. To modify the preprocessing steps of your images (for example, scaling images during training), chain them using torchvision.transforms.Compose(transforms) and pass them to the preprocessing argument of the data loader.

- val_loader() → torch.utils.data.dataloader.DataLoader

Loader of the validation dataset.

The augmentation and preprocessing should be the same as for train_loader. The only difference is the set of data. This allows you to inspect the train/val curves for overfitting.

As in the train_loader, the annotations should be encoded fields so that the loss function can be computed.

- eval_loader() → torch.utils.data.dataloader.DataLoader

Loader of the evaluation dataset.

For local runs, it is common that the validation dataset is also the evaluation dataset. This is then changed to test datasets (without ground truth) to produce predictions for submissions to a competition server that holds the private ground truth.

This loader shouldn’t have any data augmentation. The images should be as close as possible to the real application. The annotations should be the ground-truth annotations, in a form similar to the expected output of the decoder.

Now that you have a general view of the structure of a data module, we suggest you refer to the implementation of the ApolloKp class. You can get started by copying and modifying this class according to your needs.
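
As a rough illustration of the cli and configure pattern described above, the following sketch uses only the standard library. The class name MyDatasetKp, the --mydataset-* flags, and the default paths are hypothetical; a real plugin would inherit from openpifpaf.datasets.DataModule and would also implement the loaders and metrics.

```python
import argparse


class MyDatasetKp:
    """Stand-in for a data module (hypothetical; a real plugin would
    inherit from openpifpaf.datasets.DataModule)."""

    # Class variables hold the dataset configuration (hypothetical defaults).
    train_annotations = 'data-mydataset/annotations/train.json'
    val_annotations = 'data-mydataset/annotations/val.json'
    square_edge = 513

    @classmethod
    def cli(cls, parser: argparse.ArgumentParser):
        # Prefix every flag with the dataset name to keep it unique.
        group = parser.add_argument_group('data module MyDataset')
        group.add_argument('--mydataset-train-annotations',
                           default=cls.train_annotations)
        group.add_argument('--mydataset-val-annotations',
                           default=cls.val_annotations)
        group.add_argument('--mydataset-square-edge',
                           default=cls.square_edge, type=int)

    @classmethod
    def configure(cls, args: argparse.Namespace):
        # Copy the parsed values back into the class variables.
        cls.train_annotations = args.mydataset_train_annotations
        cls.val_annotations = args.mydataset_val_annotations
        cls.square_edge = args.mydataset_square_edge


parser = argparse.ArgumentParser()
MyDatasetKp.cli(parser)
args = parser.parse_args(['--mydataset-square-edge', '769'])
MyDatasetKp.configure(args)
print(MyDatasetKp.square_edge)  # prints 769
```

Keeping the configuration in class variables means the loaders can read it later without receiving the parsed arguments again.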

2. Plugin Registration#

For the core system to recognize the new plugin, you need to follow a naming convention and register the data module in an __init__.py file:

Naming convention: place all the plugin files in a folder named openpifpaf_<plugin_name>. Only folders whose names start with openpifpaf_ are recognized.

Registration: inside the folder, create an __init__.py file and add the data module that we have just created (ApolloKp) to the list of existing plugins. In this case, the name apollo represents the name of the dataset.

import openpifpaf
from .apollo_kp import ApolloKp  # the data module defined in apollo_kp.py

def register():
    openpifpaf.DATAMODULES['apollo'] = ApolloKp
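
To give some intuition for the naming convention, here is a minimal sketch of prefix-based plugin discovery using the standard library. It is an illustration only and not the core system's actual implementation; the helper names find_plugin_names and register_all are hypothetical.

```python
import importlib
import pkgutil

PLUGIN_PREFIX = 'openpifpaf_'


def find_plugin_names(prefix=PLUGIN_PREFIX):
    """Return names of importable top-level modules that start with prefix."""
    return sorted(
        module.name
        for module in pkgutil.iter_modules()
        if module.name.startswith(prefix)
    )


def register_all(prefix=PLUGIN_PREFIX):
    """Import each matching module and call its register() if it has one."""
    registered = []
    for name in find_plugin_names(prefix):
        module = importlib.import_module(name)
        if hasattr(module, 'register'):
            module.register()
            registered.append(name)
    return registered
```

Under this scheme, a folder that is not on the Python path, or whose name lacks the prefix, is silently ignored, which is a common reason for a new dataset name not showing up.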

3. Constants#

Create a module constants.py containing all the constants needed to define the 2D keypoints of vehicles. The most important are:

Names of the keypoints, as a list of strings. In our plugin this is called CAR_KEYPOINTS.

Skeleton: the connections between the keypoints, as a list of two-element lists indicating the indices of the starting and ending keypoints of each connection. In our plugin this is called CAR_SKELETON.

Sigmas: the size of the area to compute the object keypoint similarity (OKS), if you wish to use average precision (AP) as a metric.

Score weights: the weights to compute the overall score of an object (e.g. car or person). When computing the overall score, the highest weights will be assigned to the most confident joints.

Categories of the keypoints. In this case, the only category is car.

Standard pose of the keypoints, to visualize the connections between the keypoints and as an argument for the head network.

Horizontal flip equivalents: if you use horizontal flipping as an augmentation technique, you will need to define the corresponding left and right keypoints as a dictionary, e.g. left_ear –> right_ear. In our plugin this is called HFLIP.

In addition to the constants, the module contains two functions to draw the skeleton and save it as an image. The functions are only for debugging and can usually be used as they are, only changing the arguments with the new constants. For additional information, refer to the file constants.py.
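
The constants listed above can be sketched for a toy four-keypoint object as follows. All names and values here (TOY_KEYPOINTS, check_constants, the sigma values) are hypothetical and not taken from the ApolloCar3D plugin; the skeleton is assumed to use 1-based indices as in COCO-style annotations, and the pose rows are assumed to be (x, y, v) triples. The small checker is one way to catch inconsistencies before training.

```python
# Hypothetical constants for a toy four-keypoint object (not from ApolloCar3D).
TOY_KEYPOINTS = ['front_left', 'front_right', 'rear_left', 'rear_right']

# Connections as pairs of keypoint indices, assumed 1-based as in COCO.
TOY_SKELETON = [[1, 2], [1, 3], [2, 4], [3, 4]]

# One sigma and one score weight per keypoint (illustrative values).
TOY_SIGMAS = [0.05] * len(TOY_KEYPOINTS)
TOY_SCORE_WEIGHTS = [1.0] * len(TOY_KEYPOINTS)

TOY_CATEGORIES = ['toy']

# Standard pose: one (x, y, v) row per keypoint (layout is an assumption).
TOY_POSE = [
    [-1.0, 1.0, 2.0],   # front_left
    [1.0, 1.0, 2.0],    # front_right
    [-1.0, -1.0, 2.0],  # rear_left
    [1.0, -1.0, 2.0],   # rear_right
]

# Left/right equivalents used by horizontal flipping.
TOY_HFLIP = {
    'front_left': 'front_right',
    'front_right': 'front_left',
    'rear_left': 'rear_right',
    'rear_right': 'rear_left',
}


def check_constants(keypoints, skeleton, sigmas, score_weights, pose, hflip):
    """Raise AssertionError if the constants are inconsistent."""
    n = len(keypoints)
    assert len(set(keypoints)) == n, 'keypoint names must be unique'
    assert len(sigmas) == n and len(score_weights) == n and len(pose) == n
    for start, end in skeleton:
        assert 1 <= start <= n and 1 <= end <= n and start != end
    for source, target in hflip.items():
        assert source in keypoints and target in keypoints
        assert hflip.get(target) == source, 'HFLIP must be symmetric'
    return True


check_constants(TOY_KEYPOINTS, TOY_SKELETON, TOY_SIGMAS,
                TOY_SCORE_WEIGHTS, TOY_POSE, TOY_HFLIP)
```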

4. Data Loading#

If you are using COCO-style annotations, there is no need to create a dataloader to load images and annotations. A default CocoLoader is already available to be called inside the data module ApolloKp.

If you wish to load different annotations, you can either write your own dataloader, or you can transform your annotations to COCO style .json files. In this plugin, we first convert ApolloCar3D annotations into COCO style .json files and then load them as standard annotations.

5. Annotations format#

This step transforms custom annotations, in this case from ApolloCar3D, into COCO-style annotations. Below we describe how to populate a json file using COCO-style format. For the full working example, check the module apollo_to_coco.py inside the plugin.

import time

def initiate_json(self):
    """
    Initiate json file: one for training phase and another one for validation.
    """
    self.json_file["info"] = dict(
        url="https://github.com/openpifpaf/openpifpaf",
        # gmtime() matches the +0000 (UTC) offset written in the timestamp
        date_created=time.strftime("%a, %d %b %Y %H:%M:%S +0000", time.gmtime()),
        description="Conversion of ApolloCar3D dataset into MS-COCO format")
    self.json_file["categories"] = [dict(
        name='',           # Category name
        id=1,              # Id of category
        skeleton=[],       # Skeleton connections (check constants.py)
        supercategory='',  # Same as category if no supercategory
        keypoints=[])]     # Keypoint names
    self.json_file["images"] = []       # Empty for initialization
    self.json_file["annotations"] = []  # Empty for initialization

def process_image(json_file):
    """
    Update image field in json file
    """
    # ------------------
    # Add here your code
    # ------------------
    json_file["images"].append({
        'coco_url': "unknown",
        'file_name': '',  # Image name
        'id': 0,          # Image id
        'license': 1,     # License type
        'date_captured': "unknown",
        'width': 0,       # Image width (pixels)
        'height': 0})     # Image height (pixels)

def process_annotation(json_file):
    """
    Process and include in the json file a single annotation (instance) from a given image
    """
    # ------------------
    # Add here your code
    # ------------------
    json_file["annotations"].append({
        'image_id': 0,       # Image id
        'category_id': 1,    # Id of the category (like car or person)
        'iscrowd': 0,        # 1 to mask crowd regions, 0 if the annotation is not a crowd annotation
        'id': 0,             # Id of the annotation
        'area': 0,           # Bounding box area of the annotation (width*height)
        'bbox': [],          # Bounding box coordinates (x0, y0, width, height), where x0, y0 are the top-left corner
        'num_keypoints': 0,  # Number of keypoints
        'keypoints': [],     # Flattened list of keypoints [x, y, visibility, x, y, visibility, .. ]
        'segmentation': []}) # To add a segmentation of the annotation, empty otherwise
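
To make the annotation fields above concrete, the following sketch fills them in for a single instance. The helper make_annotation is hypothetical and not part of apollo_to_coco.py. It assumes that the bounding box is the tight box around the labeled keypoints, which is only one possible choice (your dataset may provide its own boxes), and that visibility follows the COCO convention: 0 for not labeled, 1 for labeled but not visible, 2 for labeled and visible.

```python
def make_annotation(keypoints_xyv, image_id, annotation_id, category_id=1):
    """Build one COCO-style annotation dict from (x, y, visibility) triples."""
    # Only labeled keypoints (visibility > 0) contribute to the box and count.
    labeled = [(x, y) for x, y, v in keypoints_xyv if v > 0]
    if not labeled:
        raise ValueError('annotation has no labeled keypoints')
    xs = [x for x, _ in labeled]
    ys = [y for _, y in labeled]
    x0, y0 = min(xs), min(ys)
    width, height = max(xs) - x0, max(ys) - y0
    return {
        'image_id': image_id,
        'category_id': category_id,
        'iscrowd': 0,
        'id': annotation_id,
        'area': width * height,
        'bbox': [x0, y0, width, height],
        'num_keypoints': len(labeled),
        # Flatten [(x, y, v), ...] into [x, y, v, x, y, v, ...].
        'keypoints': [value for triple in keypoints_xyv for value in triple],
        'segmentation': [],
    }


annotation = make_annotation(
    [(10, 20, 2), (30, 60, 2), (0, 0, 0)], image_id=7, annotation_id=1)
print(annotation['bbox'])  # prints [10, 20, 20, 40]
```

Unlabeled keypoints stay in the flattened list as zeros so that the list length always equals three times the number of keypoint names.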

Training#

We have seen all the elements needed to create your own plugin for a custom dataset. To train on the dataset, all OpenPifPaf commands are still valid. There are only two differences:

Specify the dataset name in the training command. In this case, we have called our dataset apollo during the registration phase, therefore we will have --dataset=apollo.

Include the commands we have created in the data module for this specific dataset, for example --apollo-square-edge to define the size of the training crops.

A training command may look like this:

python3 -m openpifpaf.train --dataset apollo \
  --apollo-square-edge=769 \
  --basenet=shufflenetv2k16 --lr=0.00002 --momentum=0.95 --b-scale=5.0 \
  --epochs=300 --lr-decay 160 260 --lr-decay-epochs=10 --weight-decay=1e-5 \
  --val-interval 10 --loader-workers 16 --apollo-upsample 2 \
  --apollo-bmin 2 --batch-size 8

Evaluation#

Evaluation on the COCO metric is supported by OpenPifPaf, and a simple evaluation command may look like this:

python3 -m openpifpaf.eval --dataset=apollo --checkpoint <path of the model>

To evaluate on custom metrics, we need to define a new metric and add it to the list of metrics inside the data module. In our case, we have a DataModule class called ApolloKp, and its function metrics returns the list of metrics to run. Each metric is defined as a class that inherits from openpifpaf.metric.base.Base.

For more information, please check how we implemented a simple metric for the ApolloCar3D dataset called MeanPixelError, which calculates the mean pixel error and detection rate for a given image.
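
As an illustration of what such a metric computes, here is a standalone sketch of a mean pixel error and detection rate calculation. It is not the plugin's MeanPixelError implementation: the class name, the simplified accumulate signature, the 10-pixel threshold, and the assumption that each prediction is already matched to its ground-truth instance are all hypothetical. A real metric would inherit from openpifpaf.metric.base.Base.

```python
import math


class MeanPixelErrorSketch:
    """Standalone sketch of a keypoint metric (hypothetical interface)."""

    def __init__(self, threshold=10.0):
        self.threshold = threshold  # hypothetical detection threshold in pixels
        self.errors = []            # pixel error of every detected keypoint
        self.n_ground_truth = 0     # number of labeled ground-truth keypoints

    def accumulate(self, predicted, ground_truth):
        """Both arguments are lists of (x, y, visibility) for one matched instance."""
        for (px, py, pv), (gx, gy, gv) in zip(predicted, ground_truth):
            if gv <= 0:
                continue  # keypoint not labeled in the ground truth
            self.n_ground_truth += 1
            if pv <= 0:
                continue  # keypoint not predicted
            error = math.hypot(px - gx, py - gy)
            if error <= self.threshold:
                self.errors.append(error)  # counts as a detection

    def stats(self):
        n_detected = len(self.errors)
        mean_error = sum(self.errors) / n_detected if n_detected else float('nan')
        rate = n_detected / self.n_ground_truth if self.n_ground_truth else 0.0
        return {'mean_pixel_error': mean_error, 'detection_rate': rate}


metric = MeanPixelErrorSketch()
metric.accumulate(
    predicted=[(3, 4, 1), (100, 100, 1), (0, 0, 0)],
    ground_truth=[(0, 0, 2), (50, 50, 2), (5, 5, 2)],
)
print(metric.stats())
```

In this example only the first keypoint falls within the threshold, so the mean pixel error is 5.0 and the detection rate is one out of three labeled keypoints.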

Prediction#

To run your model trained on a different dataset, you simply need to run the standard OpenPifPaf command specifying your model. A prediction command looks like this:

python3 -m openpifpaf.predict --checkpoint <model path>

All the command line options are still valid; check them with:

python3 -m openpifpaf.predict --help

Final remarks#

We hope you’ll find this guide useful for creating your own plugin. For more information, check the guide section for the ApolloCar3D plugin.

Please keep us posted on issues you encounter (using the issue section on GitHub) and especially on your successes! We will be more than happy to add your plugin to the list of OpenPifPaf related projects.

Finally, if you find OpenPifPaf useful for your research, we would be happy if you cite us!